Adaptive Attacks: Learning to Evade Machine Learning-Based IDS

Introduction

Intrusion Detection Systems (IDS) are a key cybersecurity control: they monitor and analyze network traffic to detect malicious activity and attempts to exploit vulnerabilities. They are also among the oldest technical controls. Like many other technologies, IDS now incorporate ML and AI, using models that learn the intricate patterns of network behavior in order to improve detection accuracy and reduce false positives. However, the adoption of ML in IDS brings a new problem: adaptive attacks, sophisticated attacks that aim to manipulate and bypass detection mechanisms. These attacks pose a formidable challenge because they mutate or camouflage their properties to blend in with normal network traffic and so evade detection. Understanding adaptive attacks against ML is therefore critical for security professionals.

Understanding Intrusion Detection Systems

IDSs can be classified into two types: signature-based and anomaly-based. A signature-based IDS compares network traffic against a database of known attack signatures and therefore detects known threats with high accuracy. An anomaly-based IDS, in contrast, establishes a baseline of normal network behavior and alerts administrators to deviations from it, which might indicate novel, previously unknown threats.
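The difference between the two approaches can be shown with a minimal sketch. Everything in it is an illustrative assumption: the `SIGNATURES` list, the `BaselineDetector` class, the z-score rule, and the traffic numbers are hypothetical and not taken from any real IDS.

```python
import statistics

# Hypothetical signature database: byte patterns of known attacks (illustrative only).
SIGNATURES = [b"/etc/passwd", b"' OR 1=1", b"<script>"]

def signature_match(payload: bytes) -> bool:
    """Signature-based check: flag the payload if it contains any known pattern."""
    return any(sig in payload for sig in SIGNATURES)

class BaselineDetector:
    """Anomaly-based check: flag values that lie far from a learned baseline."""

    def __init__(self, normal_values, threshold=3.0):
        self.mean = statistics.mean(normal_values)
        self.stdev = statistics.stdev(normal_values)
        self.threshold = threshold  # assumed cut-off, in standard deviations

    def is_anomalous(self, value: float) -> bool:
        z = abs(value - self.mean) / self.stdev
        return z > self.threshold

# Baseline: requests per minute observed during normal operation (made-up numbers).
detector = BaselineDetector([98, 102, 100, 97, 103, 101, 99, 100])

print(signature_match(b"GET /?id=1' OR 1=1--"))  # known pattern present
print(detector.is_anomalous(500))                # far above the baseline
```

The signature check can only catch what is already in its database, while the baseline check reacts to anything unusual, including benign but rare behavior. That trade-off is what the two categories above describe.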

Machine learning-based IDS use machine learning models to discern patterns and anomalies in network traffic that might elude traditional IDS. These models are trained to recognize the complex behavioral patterns indicative of malicious activity, which enables them to detect threats that have no predefined signature. Integrating machine learning into IDS can improve detection and reduce false positives. Because the models can be retrained as new traffic is observed, they can continuously refine their understanding of network behavior and improve their ability to distinguish legitimate from malicious activity.

Learning to Evade: How Adaptive Attacks Work

Exploiting Model Vulnerabilities

Attackers can exploit several vulnerabilities in ML-based IDS to evade detection. One prevalent vulnerability is the susceptibility of models to adversarial inputs: inputs perturbed specifically to deceive the model into making incorrect predictions. By repeatedly querying the target model and observing its responses, attackers can infer valuable information about its structure, parameters, and learned features, and use it to craft inputs that the model fails to classify correctly. This reconnaissance allows attackers to modify malicious payloads or network traffic patterns so that they resemble benign inputs to the model, evading detection while retaining their damaging capabilities.
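As a rough illustration of model querying, the sketch below assumes a deliberately simple target: a hypothetical `blackbox_ids` function that alerts above a hidden packet-rate threshold and reveals only its alert/no-alert decision. Real detectors use many features and are far harder to probe; the function names and the threshold value are made up for this example.

```python
def blackbox_ids(packets_per_second: float) -> bool:
    """Stand-in for the target IDS: the attacker sees only alert / no alert.
    The hidden rule (alert above 250 pps) is a made-up assumption."""
    return packets_per_second > 250.0

def estimate_threshold(query, low, high, tolerance=1.0):
    """Binary search for the decision boundary using label-only queries.
    Assumes query(low) is False and query(high) is True."""
    queries = 0
    while high - low > tolerance:
        mid = (low + high) / 2
        queries += 1
        if query(mid):
            high = mid   # alert raised: the boundary is below mid
        else:
            low = mid    # no alert: the boundary is at or above mid
    return low, queries

# The attacker learns the highest rate that stays under the radar.
safe_rate, n_queries = estimate_threshold(blackbox_ids, low=0.0, high=1000.0)
print(safe_rate, n_queries)
```

Even in this toy setting, a handful of queries is enough to locate the boundary, which is one reason rate-limiting and monitoring of probing behavior are often suggested as complementary controls.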

Evasion Techniques

Adaptive attackers use a variety of sophisticated techniques to circumvent the detection mechanisms of machine learning models. One is model poisoning, which acts at training time: the attacker manipulates the training data to mislead the model during its learning phase, producing a compromised model that cannot accurately distinguish benign from malicious activity. Another is the crafting of adversarial examples, which acts at inference time: inputs are intentionally perturbed to mislead the model while the change remains negligible to a human observer or, for network traffic, leaves the malicious functionality intact. These adversarial examples can deceive the model into classifying malicious inputs as benign, allowing the attacker to infiltrate the network undetected. The growing arsenal of evasion techniques underscores the need to develop robust, resilient models and to keep improving defensive measures.
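A minimal sketch of an adversarial example, in the style of the fast gradient sign method, is shown below. It assumes a hypothetical linear detector over three flow features scaled to [0, 1]; the feature names, `WEIGHTS`, `BIAS`, and the sample flow are invented for illustration and do not describe any real IDS.

```python
import math

# Hypothetical linear detector over three flow features, all scaled to [0, 1].
# Feature order: [packet_rate, payload_entropy, mean_inter_arrival]
WEIGHTS = [2.0, 3.0, -1.0]
BIAS = -2.0

def malicious_score(x):
    """Logistic-regression score: estimated probability that the flow is malicious."""
    z = sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS
    return 1.0 / (1.0 + math.exp(-z))

def sign(v):
    return (v > 0) - (v < 0)

def fgsm_evasion(x, epsilon):
    """Shift each feature by epsilon against the gradient sign to lower the score.
    For a linear model, the gradient of the logit with respect to x is WEIGHTS."""
    perturbed = [xi - epsilon * sign(w) for xi, w in zip(x, WEIGHTS)]
    return [min(1.0, max(0.0, xi)) for xi in perturbed]  # keep features in range

flow = [0.8, 0.7, 0.2]                      # made-up malicious flow
evasive_flow = fgsm_evasion(flow, epsilon=0.3)

print(malicious_score(flow))          # above the 0.5 decision threshold
print(malicious_score(evasive_flow))  # below it after the perturbation
```

In practice, an attacker cannot change every feature freely: the perturbed traffic still has to carry out the attack, so only some features (for example timing or padding) are realistically adjustable.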

Countering Adaptive Attacks

Defensive Strategies

One of the foremost strategies for defending against adaptive attacks is to make model training robust. This includes techniques like adversarial training, in which the model is trained not just on regular examples but also on adversarial ones, effectively teaching it to recognize and counteract manipulated inputs. Regularly updating the model is equally crucial: as new evasion tactics emerge, continuously retraining the model on the latest data keeps it relevant and able to detect these evolving threats. Alongside the model itself, maintaining the confidentiality and integrity of the training data is critical. If attackers compromise the data on which the model trains, the resulting model becomes an unwitting accomplice. Securing the whole training pipeline, from data collection and preprocessing to the actual model training, needs to be a priority in order to prevent model poisoning and related threats.
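Adversarial training can be sketched on a toy problem as follows. This is a simplified illustration under stated assumptions, not a production recipe: the model is a two-feature logistic regression written from scratch, the perturbation is a single fast-gradient-sign step, and the data, `eps`, `lr`, and `epochs` values are all made up.

```python
import math

def predict_proba(w, b, x):
    """Logistic-regression probability that flow x is malicious."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))

def sign(v):
    return (v > 0) - (v < 0)

def fgsm(w, b, x, y, eps):
    """Perturb x by eps per feature in the direction that increases the loss."""
    err = predict_proba(w, b, x) - y            # d(loss)/d(logit) for log loss
    return [xi + eps * sign(err * wi) for xi, wi in zip(x, w)]

def sgd_step(w, b, x, y, lr):
    """One gradient step on the log loss for a single example."""
    err = predict_proba(w, b, x) - y
    w = [wi - lr * err * xi for wi, xi in zip(w, x)]
    return w, b - lr * err

def adversarial_train(data, eps=0.1, lr=0.5, epochs=200):
    w, b = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for x, y in data:
            x_adv = fgsm(w, b, x, y, eps)        # craft against the current model
            w, b = sgd_step(w, b, x, y, lr)      # learn from the clean example
            w, b = sgd_step(w, b, x_adv, y, lr)  # and from its perturbed copy
    return w, b

# Toy flows: two features scaled to [0, 1]; label 1 = malicious (made-up data).
DATA = [([0.1, 0.2], 0), ([0.2, 0.1], 0), ([0.15, 0.15], 0), ([0.2, 0.2], 0),
        ([0.8, 0.9], 1), ([0.9, 0.8], 1), ([0.85, 0.85], 1), ([0.8, 0.8], 1)]

w, b = adversarial_train(DATA)
clean_accuracy = sum((predict_proba(w, b, x) > 0.5) == bool(y) for x, y in DATA) / len(DATA)
print(w, b, clean_accuracy)
```

The key design choice is that the adversarial copy is regenerated against the current weights at every step, so the model keeps seeing the perturbations that are hardest for it at that moment rather than a fixed set crafted once.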

The Role of Research and Development

The ever-evolving nature of adaptive attacks demands a proactive research stance to anticipate and counter them effectively. Several notable research papers tackle this challenge. For instance, the study “Towards deep learning models resistant to adversarial attacks” [1] emphasizes the value of crafting adversarial examples during training to bolster model resilience. The work in [2] introduces defensive distillation as a defensive measure. Research in [3] examines the underlying reasons why deep learning models are susceptible to adversarial attacks; it builds on [4], which explores the practical aspects of deploying adversarial defenses across diverse data modalities. In addition, the study in [5] rigorously evaluates the resilience of neural networks in the face of concerted adversarial efforts, providing insights into potential vulnerabilities. Collectively, these contributions underscore the critical role of academia and industry in collaboratively building more secure and resilient ML models.
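For readers who want the underlying formulations, the first two ideas can be written compactly. The notation here is the commonly used one and is an assumption of this sketch: $\theta$ are the model parameters, $\mathcal{D}$ the data distribution, $L$ the training loss, $\mathcal{S}$ the set of allowed perturbations, $z_i(x)$ the logit for class $i$, and $T$ the distillation temperature.

Adversarial training as in [1] is usually stated as a min-max problem, in which the model is trained against the worst-case perturbation of each example:

$$
\min_{\theta} \; \mathbb{E}_{(x,y)\sim\mathcal{D}} \Big[ \max_{\delta \in \mathcal{S}} L(\theta,\, x+\delta,\, y) \Big]
$$

Defensive distillation as in [2] trains a second model on the softened output probabilities of a first one, obtained from a softmax with temperature $T$:

$$
F_i(x) = \frac{e^{z_i(x)/T}}{\sum_{l} e^{z_l(x)/T}}
$$

A higher $T$ yields smoother probabilities, which is intended to reduce the model's sensitivity to small input changes.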

Future of IDS and Adaptive Attacks

Predicting Future Threats

The evolution of adaptive attacks is intrinsically linked to advancements in ML and AI, creating a perpetual arms race between attackers and defenders. The future may bring highly sophisticated adaptive attacks that leverage cutting-edge AI techniques to mimic normal network behavior more convincingly or to discover and exploit novel vulnerabilities more efficiently. Such advances could make these attacks more autonomous and capable of self-learning, posing severe and dynamically evolving challenges for intrusion detection systems.

Future Defense Mechanisms

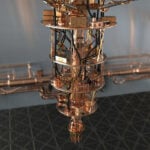

In response to the escalating threats, future defense mechanisms are likely to leverage advanced AI techniques to strengthen intrusion detection systems against adaptive attacks. These may include AI models that are inherently robust against adversarial manipulation [6], that employ real-time learning to adapt promptly to new threats, and that use enhanced prediction and classification methods to detect anomalies more accurately. Emerging technologies such as quantum computing and blockchain could further bolster security, the former by offering enhanced encryption and the latter by helping to ensure data integrity.

Conclusion

As the convergence of adaptive attacks and machine learning-based Intrusion Detection Systems (IDS) defines a new battleground in cybersecurity, the constant evolution of evasion techniques requires innovative defensive strategies.

References

[1] Mądry, A., Makelov, A., Schmidt, L., Tsipras, D., & Vladu, A. (2017). Towards deep learning models resistant to adversarial attacks. arXiv preprint arXiv:1706.06083.

[2] Papernot, N., McDaniel, P., Wu, X., Jha, S., & Swami, A. (2016). Distillation as a Defense to Adversarial Perturbations against Deep Neural Networks. IEEE Symposium on Security and Privacy (SP).

[3] Huang, L., Gao, C., & Liu, N. (2023). Erosion Attack: Harnessing Corruption To Improve Adversarial Examples. IEEE Transactions on Image Processing.

[4] Kurakin, A., Goodfellow, I., & Bengio, S. (2017). Adversarial Attacks and Defenses in Images, Speech and Text. arXiv preprint arXiv:1702.05983.

[5] Carlini, N., & Wagner, D. (2017). Towards Evaluating the Robustness of Neural Networks. IEEE Symposium on Security and Privacy (SP).

[6] Sabour, S., Cao, Y., Faghri, F., & Fleet, D. J. (2015). Adversarial manipulation of deep representations. arXiv preprint arXiv:1511.05122.
