Defence.AI – Securing the Future

I find Artificial Intelligence (AI) to be awe-inspiring. In my opinion, it is the most transformative technology in human history. Its applications span industries: automating tasks, enhancing decision-making, driving innovation, and acting as the linchpin that amplifies the benefits of other emerging technologies. AI has the potential to give us new medicines, eliminate poverty, offer solutions to pressing challenges like water scarcity and climate change, and make the world more peaceful.

However, AI’s vast potential is accompanied by a myriad of challenges and risks. The technology could be weaponized to create and disseminate disinformation on an unprecedented scale, orchestrate cyber-kinetic attacks with the potential to harm or even kill, and disrupt the global job market, leaving millions unemployed. Some even argue that unchecked advancements in AI could spell the end of humanity as we know it.

My commitment to understanding and addressing these risks has been unwavering. For almost three decades I’ve been raising awareness of cyber-kinetic risks. And, for close to two decades, since recognizing the connection between AI and cyber-kinetic risks (via AI-driven automation of cyber-physical systems), I have been researching AI risks in general and have been vocal about potential pitfalls of AI, sharing my insights through various platforms. This website serves as a consolidated space for my writings and thoughts on what I deem to be among the most pressing global challenges. I will focus on AI security challenges, especially concerning their ramifications in the defence sector.

AI Security

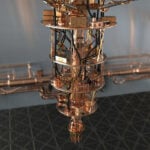

AI security is not just about protecting the systems from external threats; it’s about understanding the inherent vulnerabilities of AI. These vulnerabilities arise from the very nature of AI. For instance, AI models, trained on vast datasets, can be compromised if the data they’re trained on is tampered with, a phenomenon known as data poisoning. Similarly, the complexity of AI models can sometimes be exploited by attackers to reconstruct the data they were trained on or to make them produce incorrect outputs through adversarial attacks.
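To make data poisoning concrete, here is a minimal sketch in Python. Everything in it is an illustrative assumption rather than a real system: the one-dimensional toy data, the nearest-centroid "model", and the names `train_centroids`, `predict`, `CLEAN`, and `POISON` are all hypothetical. It shows how a few mislabelled training samples can shift a decision boundary so that the same input gets a different answer.

```python
from statistics import mean


def train_centroids(samples):
    """Return {label: mean feature value} for a list of (value, label) pairs."""
    by_label = {}
    for value, label in samples:
        by_label.setdefault(label, []).append(value)
    return {label: mean(values) for label, values in by_label.items()}


def predict(centroids, value):
    """Assign the label whose centroid is closest to the input value."""
    return min(centroids, key=lambda label: abs(value - centroids[label]))


# Hypothetical clean training set: class 0 clusters near 2, class 1 near 8.
CLEAN = [(1.0, 0), (2.0, 0), (3.0, 0), (7.0, 1), (8.0, 1), (9.0, 1)]

# Hypothetical poison: low-valued samples deliberately labelled as class 1,
# which drags the class 1 centroid towards the class 0 region.
POISON = [(0.0, 1), (1.0, 1), (2.0, 1)]

clean_model = train_centroids(CLEAN)
poisoned_model = train_centroids(CLEAN + POISON)

# The same input is classified differently once the training data is tampered with.
print(predict(clean_model, 4.0))
print(predict(poisoned_model, 4.0))
```

Real attacks target far larger models and datasets, but the mechanism is the same in spirit: the attacker never touches the model itself, only the data it learns from.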

Addressing these challenges requires a comprehensive understanding of AI, its potential threats, as well as potential impacts if AI is exploited. It’s about ensuring robust training of models, continuously monitoring their operations, and striving for transparency in how they make decisions.

This is where I hope Defence.AI will play a small role. As a platform, Defence.AI curates my writings on AI security and serves as a repository of my articles. I envision it as a springboard for those keen on delving deeper into the subject, offering an accessible introduction to the intricacies and nuances of AI security.

Transitioning from the broader landscape of AI security, there’s one sector where the stakes for both cyber-kinetic attacks and AI attacks are exceptionally high: defence.

AI use in Defence

If AI risk analysis has a ground zero, it is the use of AI in defence applications. The integration of AI into defence systems brings forth a unique set of challenges. AI has the potential to become the key to safeguarding our safety, freedom, and democracy, provided it is developed with responsibility, safety, security, and controllability at its core. The potential of AI to revolutionize military capabilities is enormous, promising efficiency, precision, and advanced decision-making capabilities that could fundamentally alter the way nations defend themselves and maintain peace.

But this promising frontier is not without its perils. As with all powerful technologies, the unchecked, irresponsible use of AI in the military could lead to devastating outcomes. Its application there presents particularly significant dangers due to the inherent nature of warfare and national defence. Military AI systems are designed to make rapid decisions, often with life-or-death implications. As these systems become more autonomous, there is a risk that they could act in unintended ways or make decisions that humans would deem unethical or inhumane. For example, autonomous weapons, or “killer robots”, could be programmed to conduct attacks without human intervention, which raises concerns about accountability and the potential for catastrophic errors or misuse. Additionally, as countries race to gain an AI advantage in their military capabilities, the result may be an AI arms race that increases the probability of conflict and tension globally. Cybersecurity also poses significant threats, as military AI systems and their auxiliary systems could become targets for hacking, which could result in unauthorized actions with severe consequences. This is particularly concerning considering that many AI security challenges remain open.

This paradox is one of the reasons why I created Defence.AI.

Defence.AI Purpose

Besides collecting my writings on AI security, I started collecting information at Defence.AI to illuminate the ongoing integration of AI into global military systems. My hope is to act as an impartial observer and analyst of AI in defence, providing updates, analyses, and reports on the latest developments, and so contributing to an informed and balanced discussion on AI’s place on the global battlefield.

My primary objective is to raise awareness of the issue so that we can amplify the voices calling for the ethical use of AI in weapons systems. AI’s role in military systems often seems shrouded in secrecy, a dense fog of technological jargon and complex strategies that can make it difficult for even experts to fully comprehend. I’d love to help cut through this fog, offering clear, accessible, and insightful information on the benefits, pitfalls, and ethical considerations of this critical area. With AI’s potential to autonomously make decisions that could result in life-or-death outcomes, it’s more important than ever to ensure this technology is developed and deployed responsibly.

My Position

Given my specific area of interest – the risks AI presents within cyber-physical systems, which have the potential to endanger lives, well-being, or the environment when misused – my efforts are dedicated to championing the responsible, secure, and managed application of AI. This commitment extends to ensuring transparent explainability of the behaviour of these systems.

The challenge I grapple with, and one I extend to my readers, is to continue exploring approaches that support the AI-driven evolution of cyber-physical systems while retaining effective and reliable ethical and legal control mechanisms. This evolution encompasses the integration of AI into vital infrastructures like 5G and smart grids, into autonomous transportation, and, most impactfully, the inexorable development of autonomous weapons systems. Such complexities raise the question of how trustworthy AI systems are, and by what means their compliance and predictability could be assured under all circumstances.

My personal position is that governments, influenced by public sentiment, should deter the development of autonomous systems that directly target humans. However, I fear that, in this regard, the genie is already out of the bottle.

Finally, due to various misunderstandings of the purpose of this website, I am obliged to include this disclaimer:

This website is strictly apolitical and does not align with or endorse any particular country or political entity. All information presented here is based solely on publicly available data. I do not possess, nor do I seek access to, any non-public or confidential information. Visitors are urged to use the content responsibly and verify any information from independent sources before drawing conclusions or making decisions.

For 30+ years, I've been committed to protecting people, businesses, and the environment from the physical harm caused by cyber-kinetic threats, blending cybersecurity strategies with resilience and safety measures. Lately, my worries have grown due to the rapid, complex advancements in Artificial Intelligence (AI). Having observed AI's progression for two decades and penned a book on its future, I see it as a unique and escalating threat, especially when applied to military systems or disinformation, or when integrated into critical infrastructure like 5G networks or smart grids. More about me, and about Defence.AI.