Artificial Intelligence (AI) in Defence

Artificial Intelligence (AI) is a force multiplier reshaping the future of war. Every nation has by now realized that sustaining advanced military capabilities and national security is inextricably tied to the weaponization of AI and the strategic deployment of AI-enabled capabilities.

AI is not a single weapon. Unlike nuclear warheads, for example, which have a singular application, AI is changing every aspect of the military. A growing number of robotic vehicles and autonomous weapons are being deployed to combat zones and for surveillance. The massive volume of information now being collected by all kinds of AI-enabled unmanned systems can only be digested and analyzed with AI, and that information now supports strategic decision-making, target recognition, threat monitoring, and more. AI-enabled defensive systems are increasingly able to detect, analyze, and respond to attacks faster and more effectively than any human team could in the past. AI is at the centre of combat simulations. Drone swarms are the latest technology being explored for autonomous lethal action. Transportation and casualty evacuation are increasingly using autonomous vehicles. Here are just a few examples:

AI in Defence: Current Applications

Autonomous Systems

One of the most prominent uses of AI in defence is in the development of autonomous systems. These systems can operate independently, without human intervention, and can be used in a variety of applications, from surveillance drones to autonomous vehicles.

For instance, the U.S. Department of Defense (DoD) has been exploring the use of autonomous drones for surveillance and reconnaissance missions. These drones, equipped with AI-powered algorithms, can navigate complex environments, identify targets, and relay information back to base, all without putting human lives at risk. This not only enhances the efficiency of the operations but also minimizes the potential for human error.

Autonomous vehicles are the most visible class of these systems in use today. They include unmanned aerial vehicles (UAVs), unmanned ground vehicles (UGVs), and unmanned underwater vehicles (UUVs), and are capable of performing tasks without human intervention.

For instance, the U.S. military uses UAVs, also known as drones, for surveillance, reconnaissance, and targeted strikes. These drones can fly over hostile territories, gather intelligence, and even launch attacks, all while minimizing the risk to human soldiers. An example of this is the MQ-9 Reaper drone, which is capable of performing surveillance, reconnaissance, close air support, and targeted strikes.

Another example is the use of AI in UUVs. These vehicles are used for a variety of purposes, including detecting mines, mapping the ocean floor, and collecting data on water conditions. AI allows them to navigate the complex underwater environment, identify objects of interest, and make decisions based on the data they collect.

Predictive Maintenance

AI is also being used to predict when military equipment will need maintenance. By analyzing data from sensors on equipment, AI can identify patterns and predict when a piece of equipment is likely to fail. This allows for proactive maintenance, reducing downtime and increasing the lifespan of the equipment.

For example, the U.S. Navy has been using AI to predict when aircraft will need maintenance. The AI analyzes data from sensors on the aircraft and flags patterns that indicate a potential problem, so that the Navy can perform maintenance before the problem becomes serious.

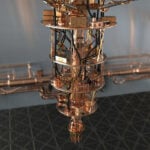

The U.S. Department of Defense’s Joint Artificial Intelligence Center (JAIC) has been working on predictive maintenance projects for the U.S. military’s vehicle fleet.

A research paper titled “KSPMI: A Knowledge-based System for Predictive Maintenance in Industry 4.0” provides an example of how AI can be used in predictive maintenance. The paper presents a knowledge-based system (KSPMI) built on a hybrid approach that combines statistical and symbolic AI technologies. The system uses machine learning and chronicle mining to extract machine degradation models from industrial data. Symbolic AI technologies, especially domain ontologies and logic rules, then use the extracted chronicle patterns to query and reason on system input data with rich domain and contextual knowledge. This approach enables the automatic detection of machinery anomalies and the prediction of future events, improving the reliability, availability, and efficiency of manufacturing systems. Although this example is from the manufacturing industry, the same principles can be applied to military equipment maintenance.
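To make the idea of predicting failure from sensor data concrete, here is a minimal sketch in Python. It is not the KSPMI method and not any fielded military system. It swaps in a much simpler technique: fit a straight-line trend to recent readings and estimate when that trend would cross a failure threshold. The function names, the vibration values, and the threshold are all hypothetical.

```python
from typing import Optional, Sequence, Tuple


def fit_linear_trend(readings: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit of readings against their sample index.

    Returns (slope, intercept) of the fitted line.
    """
    n = len(readings)
    if n < 2:
        raise ValueError("need at least two readings to fit a trend")
    mean_x = (n - 1) / 2
    mean_y = sum(readings) / n
    sxx = sum((i - mean_x) ** 2 for i in range(n))
    sxy = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(readings))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def steps_until_threshold(
    readings: Sequence[float], threshold: float
) -> Optional[float]:
    """Estimate how many more samples until the trend reaches the threshold.

    Returns 0.0 if the fitted trend is already at or above the threshold,
    and None if the trend is flat or decreasing (no failure predicted).
    """
    slope, intercept = fit_linear_trend(readings)
    current = slope * (len(readings) - 1) + intercept
    if current >= threshold:
        return 0.0
    if slope <= 0:
        return None
    return (threshold - current) / slope


# Hypothetical vibration readings from one sensor (arbitrary units).
vibration = [1.0, 1.2, 1.4, 1.6, 1.8]
remaining = steps_until_threshold(vibration, threshold=3.0)
if remaining is not None:
    print(f"Schedule maintenance within about {remaining:.1f} samples")
```

A real system would combine many sensors and learned degradation models, but the shape of the decision is the same: turn a pattern in the data into a lead time for maintenance.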

Logistics

AI is also being used to improve logistics in defence. Predictive analytics, powered by AI, can help in forecasting the demand for various resources, optimizing supply chain operations, and reducing wastage.
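As a rough illustration of demand forecasting, the sketch below uses simple exponential smoothing, a classical statistical baseline rather than a modern AI model, to forecast next-period demand and derive a reorder quantity. The function names, the demand figures, and the safety-stock value are hypothetical and chosen only for illustration.

```python
from typing import Sequence


def exponential_smoothing_forecast(
    demand: Sequence[float], alpha: float = 0.5
) -> float:
    """One-step-ahead forecast using simple exponential smoothing.

    alpha close to 1 weights recent demand heavily; alpha close to 0
    produces a smoother, slower-moving forecast.
    """
    if not demand:
        raise ValueError("demand history must not be empty")
    if not 0.0 < alpha <= 1.0:
        raise ValueError("alpha must be in (0, 1]")
    level = demand[0]
    for observed in demand[1:]:
        level = alpha * observed + (1 - alpha) * level
    return level


def reorder_quantity(forecast: float, on_hand: float, safety_stock: float) -> float:
    """Units to order so stock covers the forecast plus a safety buffer."""
    return max(0.0, forecast + safety_stock - on_hand)


# Hypothetical monthly demand for one spare part.
history = [100, 120, 110, 130]
forecast = exponential_smoothing_forecast(history, alpha=0.5)
order = reorder_quantity(forecast, on_hand=90, safety_stock=20)
print(f"forecast={forecast:.0f} units, order={order:.0f} units")
```

Ordering against a forecast rather than a fixed schedule is what reduces both stock-outs and wastage. More capable models replace the smoothing step, not the overall loop.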

Cybersecurity

AI plays a crucial role in cybersecurity in the defence sector. AI algorithms can detect unusual network activity, identify potential threats, and respond to cyber-attacks more quickly and efficiently than human operators. This is particularly important in the defence sector, where cyber-attacks can have severe national security implications.

For instance, the Defense Advanced Research Projects Agency (DARPA) in the U.S. has been developing AI systems that can automatically detect and respond to cyber threats. These systems can identify unusual network activity, analyze it to determine whether it is a threat, and then take appropriate action, such as blocking the activity or alerting a human operator. DARPA also ran the Cyber Grand Challenge, whose final event was held in 2016, to spur the development of AI systems that can autonomously evaluate software, identify vulnerabilities, and apply patches.
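The detect, analyze, and respond loop described above can be sketched in a few lines. This is a deliberately simple statistical stand-in, not DARPA's approach: it scores how far an observed traffic measurement sits from a baseline and maps the score to an action. The function names, thresholds, and traffic numbers are hypothetical.

```python
import statistics
from typing import Sequence


def anomaly_score(baseline: Sequence[float], observed: float) -> float:
    """Number of standard deviations the observation lies from the baseline mean."""
    mean = statistics.mean(baseline)
    stdev = statistics.stdev(baseline)  # needs at least two baseline points
    if stdev == 0:
        return 0.0 if observed == mean else float("inf")
    return abs(observed - mean) / stdev


def triage(score: float, alert_at: float = 3.0, block_at: float = 6.0) -> str:
    """Map an anomaly score to an action: allow, alert a human, or block."""
    if score >= block_at:
        return "block"
    if score >= alert_at:
        return "alert"
    return "allow"


# Hypothetical requests-per-second samples from normal operation.
baseline = [100, 102, 98, 101, 99]
for rate in (101, 106, 150):
    print(rate, triage(anomaly_score(baseline, rate)))
```

Keeping an intermediate "alert" band reflects the text's point that some responses are automatic while others are handed to a human operator.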

AI in Defence: Future Possibilities

AI-Enabled Warfare

As AI technology continues to advance, it is likely to play an increasingly significant role in warfare. AI could be used to coordinate and execute complex military operations, making them more efficient and reducing the risk to human soldiers.

For instance, AI could be used to coordinate a swarm of drones, each carrying out a specific task as part of a larger mission. This could include surveillance drones identifying targets, combat drones attacking the targets, and support drones providing logistical support. This would allow for a highly coordinated and efficient operation, with each drone playing a specific role.

Another potential application is in the development of autonomous weapons systems. These systems, often referred to as “killer robots”, would be able to identify and engage targets without human intervention. While this raises significant ethical and legal issues, it is a potential future application of AI in warfare.

Autonomous weapons systems are a contentious concept, with significant ethical and legal implications. A study titled “Meaningful Human Control over Autonomous Systems: A Philosophical Account” by Filippo Santoni de Sio and Jeroen van den Hoven delves into this topic. They propose two conditions for meaningful human control over autonomous systems: tracking and tracing. The tracking condition requires that an autonomous system should be able to track the relevant human (moral) reasons (in a sufficient number of occasions). The tracing condition requires that the behavior of an autonomous system is traceable to a proper moral understanding on the part of humans who design and deploy the system.

This study suggests that autonomous weapons systems can be designed to maintain meaningful human control, even as they operate independently. This involves designing the system to track the relevant moral reasons, as well as ensuring that the system’s behavior can be traced back to a proper moral understanding on the part of the humans who designed and deployed it. This approach could potentially address some of the ethical and legal concerns associated with autonomous weapons systems.

AI-Enhanced Soldiers

AI could be used to enhance the capabilities of soldiers. This could include exoskeletons that use AI to enhance strength and endurance, or helmets that use AI to provide information about the battlefield.

AI in Space Defence

The future of defence also extends beyond our planet. As nations explore space, the need for AI in space defence becomes increasingly apparent. AI could be used to track objects in space, predict potential collisions, and even coordinate defensive measures against potential threats, such as asteroids or hostile satellites.

For instance, NASA has been using AI to track asteroids and predict their trajectories. This allows them to identify potential threats and plan defensive measures, such as launching a mission to deflect the asteroid. Similarly, the U.S. Space Force could potentially use AI to track satellites and other objects in space, identify potential threats, and coordinate defensive measures.
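Collision prediction ultimately reduces to estimating how close two tracked objects will come and when. The sketch below computes the time and distance of closest approach under a strong simplifying assumption: both objects move in straight lines at constant velocity over the prediction window. Real orbital prediction uses orbital dynamics and uncertainty estimates; the function name and the sample values here are hypothetical.

```python
import math
from typing import Sequence, Tuple


def closest_approach(
    p1: Sequence[float],
    v1: Sequence[float],
    p2: Sequence[float],
    v2: Sequence[float],
) -> Tuple[float, float]:
    """Return (time, miss_distance) of closest approach for two objects.

    Positions and velocities are 3-D vectors in consistent units.
    Time is clamped to >= 0 so that only future approaches are reported.
    """
    rel_pos = [a - b for a, b in zip(p1, p2)]
    rel_vel = [a - b for a, b in zip(v1, v2)]
    speed_sq = sum(c * c for c in rel_vel)
    if speed_sq == 0:
        t = 0.0  # no relative motion: separation never changes
    else:
        dot = sum(r * v for r, v in zip(rel_pos, rel_vel))
        t = max(0.0, -dot / speed_sq)
    miss = math.sqrt(sum((r + v * t) ** 2 for r, v in zip(rel_pos, rel_vel)))
    return t, miss


# Two hypothetical objects approaching head-on with a 1-unit lateral offset.
t, miss = closest_approach((0, 0, 0), (1, 0, 0), (10, 1, 0), (-1, 0, 0))
print(f"closest approach at t={t:.1f}, miss distance={miss:.1f}")
```

A tracking system would run a check like this across many object pairs and flag any pair whose miss distance falls below a safety margin.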

AI and Disinformation/Misinformation

AI plays a significant role in both the propagation and the combating of disinformation and misinformation. On one hand, AI can be used to create convincing fake news and deepfakes, contributing to the spread of disinformation. On the other hand, AI can also be used to detect and combat disinformation, by identifying fake news and deepfakes, and alerting users to their presence.

AI in Propagating Disinformation

AI technologies, such as deep learning, have been used to create convincing fake news and deepfakes. Deepfakes are fake videos that are created using AI, where the face of a person in an existing video is replaced with the face of another person. These deepfakes can be incredibly convincing, making it difficult for viewers to distinguish between real and fake videos.

Similarly, AI can be used to generate fake news articles that are convincing and difficult to distinguish from real news. This can contribute to the spread of disinformation, as people may be misled by the fake news and form incorrect beliefs or make decisions based on false information.

AI in Combating Disinformation

On the flip side, AI can also be used to combat disinformation. AI algorithms can be trained to detect fake news and deepfakes, by analyzing the content and identifying patterns that are indicative of fakes. For instance, AI can analyze the language used in a news article to determine whether it is likely to be fake, or analyze a video to determine whether it is a deepfake.
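As a toy illustration of analyzing language to flag likely fakes, the sketch below trains a small naive Bayes classifier on word counts. It is a textbook technique, not a production detector, and the four training sentences and both labels are invented purely to show the mechanics. A usable system would need large, carefully labeled data and far richer features.

```python
import math
from collections import Counter
from typing import Dict, List, Set, Tuple


def tokenize(text: str) -> List[str]:
    """Lowercase whitespace tokenizer (deliberately naive)."""
    return text.lower().split()


class NaiveBayesTextClassifier:
    """Multinomial naive Bayes with add-one (Laplace) smoothing."""

    def __init__(self) -> None:
        self.word_counts: Dict[str, Counter] = {}
        self.doc_counts: Counter = Counter()
        self.vocabulary: Set[str] = set()

    def fit(self, examples: List[Tuple[str, str]]) -> None:
        for text, label in examples:
            self.doc_counts[label] += 1
            counts = self.word_counts.setdefault(label, Counter())
            for token in tokenize(text):
                counts[token] += 1
                self.vocabulary.add(token)

    def predict(self, text: str) -> str:
        total_docs = sum(self.doc_counts.values())
        best_label, best_score = "", -math.inf
        for label, counts in self.word_counts.items():
            # Log prior plus summed log likelihoods of each token.
            score = math.log(self.doc_counts[label] / total_docs)
            denom = sum(counts.values()) + len(self.vocabulary)
            for token in tokenize(text):
                score += math.log((counts[token] + 1) / denom)
            if score > best_score:
                best_label, best_score = label, score
        return best_label


# Invented toy examples; labels are hypothetical.
training = [
    ("shocking secret they do not want you to know", "suspect"),
    ("miracle cure doctors hate shocking truth", "suspect"),
    ("ministry publishes annual budget report", "reliable"),
    ("officials confirm schedule for annual exercise", "reliable"),
]
clf = NaiveBayesTextClassifier()
clf.fit(training)
print(clf.predict("shocking miracle secret"))
print(clf.predict("annual budget report"))
```

The classifier only learns which words co-occur with each label, so it captures style rather than truth. That is why such signals are typically used to alert users or reviewers rather than to decide on their own.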

Conclusion

AI is already playing a significant role in defence, and its role is likely to grow in the future. As this happens, it will be important to ensure that the use of AI in defence is guided by ethical principles and legal standards.

For 30+ years, I've been committed to protecting people, businesses, and the environment from the physical harm caused by cyber-kinetic threats, blending cybersecurity strategies and resilience and safety measures. Lately, my worries have grown due to the rapid, complex advancements in Artificial Intelligence (AI). Having observed AI's progression for two decades and penned a book on its future, I see it as a unique and escalating threat, especially when applied to military systems, disinformation, or integrated into critical infrastructure like 5G networks or smart grids.