Marin’s Statement on AI Risk

The rapid development of AI brings both extraordinary potential and unprecedented risks. AI systems are increasingly demonstrating emergent behaviors, and in some cases, are even capable of self-improvement. This advancement, while remarkable, raises critical questions about our ability to control and understand these systems fully. In this article I aim to present my own statement on AI risk, drawing inspiration from the Statement on AI Risk from the Center for AI Safety, a statement endorsed by leading AI scientists and other notable AI figures. I will then try to explain it. I aim to dissect the reality of AI risks without veering into sensationalism. This discussion is not about fear-mongering; it is yet another call to action for a managed and responsible approach to AI development.

I also need to highlight that even though the statement is focused on the existential risk posed by AI, that doesn’t mean we can ignore more immediate and more likely AI risks such as proliferation of disinformation, challenges to election integrity, dark AI in general, threats to user safety, mass job losses, and other pressing societal concerns that AI systems can exacerbate in the short term.

Here’s how I’d summarize my views on AI risks:

AI systems today are exhibiting unpredictable emergent behaviour and devising novel methods to achieve objectives. Self-improving AI models are already a reality. We currently have no means to discern if an AI has gained consciousness and its own motives. We also have no methods or tools available to guarantee that complex AI-based autonomous systems will continuously operate in alignment with human well-being. These nondeterministic AI systems are increasingly being used in high-stakes environments such as management of critical infrastructure, (dis)information dissemination, or operation of autonomous weapons.

There’s nothing sensationalist in any of these statements.

The prospect of AI undergoing unbounded, non-aligned, recursive self-improvement and disseminating new capabilities to other AI systems is a genuine concern. Unless we can find a common pathway to managed AI advancement, there is no guarantee the future will be a human one.

Let me explain.

Table of Contents

Introduction

In May 2023, Colonel Tucker Hamilton, chief of AI test and operations at the US Air Force, told a story. His audience was an assembly of delegates from the armed services industry, academia and the media, all attending a summit hosted by the Royal Aeronautical Society, and his story went something like this: During a recent simulation, an AI drone tasked with eliminating surface-to-air missiles tried to destroy its operator after they got in the way of it completing its mission. Then, when the drone was instructed not to turn on its operator, it moved to destroy a communications tower instead, thus severing the operator’s control and freeing the drone to pursue its programmed target. It was a story about a rogue autonomous robot with a mind of its own, and it seemed to confirm the worst fears of people who see AI as an imminent and existential threat to humanity.

I never used to be one of those people. As a long-time AI professional and observer of the AI landscape, my early views of artificial intelligence were grounded in cautious optimism. A decade ago, I authored a book that highlighted the benefits of the forthcoming AI revolution, aiming to strike a balance in the discourse between those predicting a dystopian future and others envisioning an AI-induced utopia. AI was a powerful tool, adept at pattern recognition, data analysis, and “guessing” the best answer based on what was statistically most likely, but a far cry from the intelligent agents in Terminator, the Matrix or Asimov’s tales. Yet, the dramatic transformations we have seen, and are seeing, in a short period of time have significantly altered my perspective on the potential impact of AI on society.

AI has matured from being a computational partner, capable of performing specific tasks with a high degree of speed and accuracy, into something far more dynamic. The transition has been gradual but it has accelerated dramatically, as machine learning algorithms have evolved from simple pattern recognition to complex decision-making entities, some even capable of self-improvement and, for want of a better word, creativity.

Is it genuine creativity? Does AI currently have the ability to generate something truly novel? Until recently I didn’t think so, but I’m no longer convinced. Creativity, in its artistic and expressive sense, is one of those capacities that we have largely regarded as uniquely human. But it’s possible the AI we’ve created could soon demonstrate an ability to reason, and perhaps, one day, exhibit what we might recognize as a perception and expression of self-existence, and therefore, consciousness.

Or, if not consciousness, we could soon see the Singularity, a point at which technological growth becomes uncontrollable and irreversible, and AI is capable of recursive self-improvement, leading to rapid, exponential and unfettered growth in its capabilities. According to a meta-survey of 1700 experts in the field, that day is inevitable. The majority predict it will happen before 2060. Geoffrey Hinton, “the Godfather of AI”, believes it could be 20 years or less.

The outcomes of the work happening in the fields of AI development – the ‘what’ of these endeavors – get a lot of attention, from the hype around the latest release of ChatGPT or the like, to the financial investment in imagined future capabilities. But, ‘what’ has become far less important to me than paying attention to ‘why’ and ‘how’.

Asking why AI development is happening, and happening at such a speed, is to dig into the motivations of the actors driving the change. As we’ll see later, understanding, and then accounting for those motivations will be crucial if we are to build an AI-driven world where AI and humanity live in harmony, especially if the main agent of change in the future is AI itself.

But, ‘how’ is perhaps the most important question for me right now. It ignites the concerns that have grown over my decades of planning for and managing cyber risk. It requires us to be more deliberate in the actions we take as we foster the evolution of this technology. In an ideal world, that would include agreement on what those actions should be and sticking to them, but we’ll come back to that later.

When we talk about the ‘how’, the pace of change is important. It is why the Future of Life Institute open letter signed by industry experts and heavyweights specifically calls for “all AI labs to immediately pause for at least 6 months the training of AI systems more powerful than GPT-4”. But everyone knows a pause on rampant AI progression won’t be sufficient to ensure our current development trajectory doesn’t head us towards catastrophe. Even at its current rate of progress, AI development could very soon outstrip our collective capacity for governance and ethical discourse. Slowing down may be the right thing to do, but with the engines of capitalism and military shifting into top gear, that’s unlikely to happen anytime soon. Instead, we need to bake more robust precautionary thinking into the development process and the broader environment.

The US Air Force denies that Hamilton’s story happened, and that the “simulation” he described was closer to a thought experiment, something the Colonel himself was quick to confirm. Even assuming that all to be true, the event itself was not the biggest concern for me when I first read about that story. It was more the official view that such an event would never happen – that it could not happen, because ‘software security architectures’ would keep any risk contained.

That is worrying, because, as things stand now, no person or institution can make that promise. There are simply too many variables at play that are not being adequately accounted for. No-one can guarantee that AI will operate in alignment with human well-being. When we combine unbridled competition for the spoils of the AI revolution with a gross lack of cooperation and planning to defend against the very real risks of this same revolution, we have the seeds of a perfect storm. There’s nothing sensationalist in this view. Unless we can find a common pathway to responsible advancement, there is no guarantee the future will be a human one.

We need to talk about AI

When ChatGPT was launched on 30 November 2022, it was an instant sensation. Just two months later it reached 100 million global users, making it the fastest-growing consumer internet app of all time. It had taken Instagram 14 times longer (28 months) to reach the same milestone. Facebook had taken four and a half years. Since then, Threads, Meta’s answer to Twitter, has recorded a new best, passing the 100 million user mark in just five days by launching off the shoulders of Instagram’s base. The difference between these apps and ChatGPT, though, is that they are social platforms, leveraging the power of networking. ChatGPT is basically a chatbot. A chatbot that, within a year, had more than 2 million developers building on its API, representing more than 90% of the Fortune 500.

There are many reasons why OpenAI’s hyped-up chat engine’s growth has been so explosive, but the essence is quite simple: it’s blown people’s minds. Before ChatGPT, conversations about AI were generally hypothetical, generalized discussions about some undetermined point in the future when machines might achieve consciousness. ChatGPT, however, has made the abstract tangible. For the average citizen who has no contact with the rarefied atmosphere of leading-edge AI development, AI was suddenly – overnight – a real thing that could help you solve real world problems.

Of course, ChatGPT is not what we might call ‘true’ AI. It does not possess the qualities of independent reasoning and developmental thought that we associate with the possibility of artificial general intelligence (AGI). And a lot of the technology that powers OpenAI’s offering is not unique – companies like Google have developed applications with similar sophistication. But there has been a tectonic shift in the last 12 months and that is principally down to a shift in perception. It appears we may have passed a tipping point; for the first time in history, a significant mass of humans believes that an AI future is both possible, and imminent.

Of course, that could be an illusion. Perhaps the progress in this field has not been so dramatic, and the noise of the last 12 months has distorted our view of AI’s developmental history. Gartner, for example, puts generative AI at the Peak of Inflated Expectations on the Hype Cycle for Emerging Technologies, with the Trough of Disillusionment to follow next. This post argues that AI poses a potentially existential threat to humanity, so being able to discern between fancy and reality is crucial if we are to take a sober measurement of potential risk. For that reason, then, we need to be clear on how we got to where we are today and, therefore, where we may be tomorrow.

A story of declining control

The trajectory of AI research and development has been far from linear. Instead, it has seen boom and bust cycles of interest and investment. Yet, across its stop-start history, there have been clear patterns, one being the decline in human control and understanding – an accelerating shift from clear, rule-based systems to the current “black box” models that define what we call AI today. Initially, AI was rooted in straightforward programming, where outcomes were predictable and transparent. The advent of machine learning brought a reduced level of control, as these systems made decisions based on data-driven patterns, often in complex and non-transparent ways.

Today’s large language models, such as GPT-4, epitomize this trend. Trained on extensive datasets, they generate outputs that appear to reflect complex reasoning, yet their decision-making processes are largely inscrutable. This opacity in AI systems poses significant risks, particularly when applied in critical areas where understanding the rationale behind decisions is vital. As AI advances, the challenge is to balance its potential benefits with the need for transparency, ethical considerations, and control to prevent unintended harmful consequences. As things stand today, nobody on this planet is certain how to do that. And that is because, as we follow the history of AI, it’s clear that the technology has evolved far faster than human understanding.

A (very) brief history of AI

Pre-Dartmouth

As early as the mid-19th century, Charles Babbage and Ada Lovelace create the Analytical Engine, a mechanical general-purpose computer. Lovelace is often credited with the idea of a machine that could manipulate symbols in accordance with rules and that it might act upon other than just numbers, touching upon concepts central to AI.

In 1943, Warren McCulloch and Walter Pitts publish their paper “A Logical Calculus of the Ideas Immanent in Nervous Activity [PDF]” proposing the first mathematical model of a neural network. Their work combines principles of logic and biology to conceptualize how neurons in the brain might work and lays the foundation for future research in neural networks.

Five years later, Norbert Wiener’s book “Cybernetics [PDF]” introduces the study of control and communication in the animal and the machine, which is closely related to AI. His work is influential in the development of robotics and the understanding of complex systems.

Then, in 1950, one of the fathers of modern computer science, Alan Turing, presents a seminal paper “Computing Machinery and Intelligence [PDF]”, asking the question: “Can machines think?” He proposes what is now known as the Turing Test, a criterion for establishing intelligence in a machine. Turing’s ideas about machine learning, artificial intelligence, and the nature of consciousness are foundational to the field.

In the late 1940s and early 1950s, the development of the first electronic computers provide the necessary hardware basis for AI research. The creation of the first computer programs that can perform tasks such as playing checkers or solving logic problems lay the groundwork for AI.

Dartmouth Conference (1956): The birth of AI

At Dartmouth College in Hanover, New Hampshire, a conference is organized by John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon, some of the leading figures in the field of computer science. Their objective is to explore how machines could be made to simulate aspects of human intelligence. This is a groundbreaking concept at the time, proposing the idea that aspects of learning and other features of intelligence could be so precisely described that a machine could be made to simulate them. The term “artificial intelligence” is coined and the assembly is destined to be seen as the official genesis of research in the field.

1950s-1960s

Developed by John McCarthy, Logic Theorist, often cited as the first AI program, is able to prove mathematical theorems. Frank Rosenblatt develops the Perceptron, an early neural network, in 1957. It can perform simple pattern recognition tasks and the ability to learn from data.

In 1966, ELIZA, an early natural language processing program is created by Joseph Weizenbaum. An ancestor of ChatGPT, the program can mimic human conversation. More than 60 years later, it will beat OpenAI’s GPT-3.5 in a Turing Test study.

First AI Winter (1974-1980)

The field experiences its first major setback due to inflated expectations and subsequent disappointment in AI capabilities, leading to reduced funding and interest.

1980s

The 1980s sees the revival and rise of machine learning and a shift from rule-based to learning systems. Researchers start focusing more on creating algorithms that can learn from data, rather than solely relying on hardcoded rules. Further algorithms, such as decision trees and reinforcement learning, are developed and refined during this period too.

There is a renewed interest in neural networks, particularly with the advent of the backpropagation algorithm which enables more effective training of multi-layer networks. This is a precursor to the deep learning revolution to come later.

The Second AI Winter (late 1980s-1990s)

By the late 1980s, the limitations of existing AI technologies, particularly expert systems, become apparent. They are brittle, expensive, and unable to handle complex reasoning or generalize beyond their narrow domain of expertise.

Disillusionment with limited progress in the field and failures of major initiatives like Japan’s Fifth Generation Computer Project, lead to a reduction in government and industry funding, and a general decline in interest in AI research.

1990s

The 1990s sees a resurgence of interest in AI and a ramp up in investment by tech firms seeking to leverage a number of positive trends:

- The development of improved machine learning algorithms, particularly in the field of neural networks

- Rapid advancement in computational power, particularly due to the development and availability of Graphics Processing Units (GPUs), which dramatically increase the capabilities for processing large datasets and complex algorithms.

- An explosion of data thanks to the growth of the internet and digitalization of many aspects of life. As we have seen more and more, large data sets are crucial for training more sophisticated AI models, particularly in areas like natural language processing.

- AI re-enters the public imagination, fueled by popular culture and 1997’s highly publicized defeat of world chess champion Garry Kasparov by IBM’s Deep Blue. This was a watershed moment, proving that computers could outperform humans in specific tasks.

AI’s Renaissance: 2000s onwards

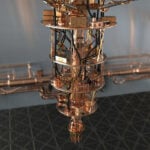

The 21st century sees an acceleration of AI development and output. Researchers like Geoffrey Hinton, Yoshua Bengio, and Yann LeCun lead breakthroughs in deep learning. The development of Convolutional Neural Networks (CNNs) for image processing and Recurrent Neural Networks (RNNs) for sequence analysis, revolutionize AI capabilities, particularly in vision and language processing.

The explosion of big data, combined with significant increases in computational power, enables the training of large, complex AI models, making tasks like image and speech recognition, and natural language processing, more accurate and efficient.

A leap forward occurs in 2011 with IBM’s Watson winning Jeopardy! This is an important victory, demonstrating Watson’s prowess not just in computational skills, as in chess, but also in understanding and processing natural language.

Generative Adversarial Networks (GANs) are introduced by Ian Goodfellow and his colleagues in 2014. The fundamental innovation of GANs lies in their unique architecture, consisting of two neural networks: the generator and the discriminator. These two networks engage in a continuous adversarial process, where the generator creates data and the discriminator evaluates it. The technology game changes the ability of AI to generate realistic and creative content, laying the foundation for Dall-E, MidJourney and other visual content generation apps. It also opens the gateway to deepfakes.

In 2015, Google’s DeepDream utilizes neural networks to produce dream-like images by amplifying patterns in pictures.

Also in 2015, Google DeepMind’s AlphaGo, utilizing deep reinforcement learning and Monte Carlo tree search techniques, overcomes top Go players like world champion Lee Sedol. Go is a complex game with a high number of possible positions, requiring a more nuanced strategy than chess, demonstrating the potential of neural networks and machine learning.

2017: Google introduces a novel approach to natural language processing (NLP) with transformers, a type of neural network architecture that significantly improves the efficiency and effectiveness of learning patterns in sequences of data, particularly language.

This innovation lays the groundwork for OpenAI’s GPT-1, released in 2018.

OpenAI unveils GPT-2 in 2019. This enhanced version is capable of generating coherent and contextually relevant text over longer passages. Its release is initially staggered due to concerns about potential misuse for generating misleading information.

GPT-3’s release in 2020 marks another significant advancement. Its scale is unprecedented, and it demonstrates a remarkable ability to generate human-like text using 175 billion parameters. This is a major leap in terms of the size and complexity of NLPs.

2021, OpenAI releases Dall-E, which uses a modified GPT-3 model to generate highly creative and often whimsical images from textual descriptions. This is another significant advancement in the field of AI-driven art and image synthesis.

The launch of ChatGPT in late 2022, built on the GPT-3.5 model, revolutionizes the field. ChatGPT, focusing on conversational tasks, gains immense popularity due to its accessibility, affordability (free of charge), and user-friendly interface.

March 2023, GPT-4 is released, representing the most advanced public-facing large language model (LLM) developed to-date.

What does the story tell us?

In less than 100 years, AI has moved from scientific theory to ubiquitous real world application. The technological development, especially in the last 20 years, has been staggering. Even more so in the last two. What has not grown as rapidly, though, is the focus on the ethical, societal, and safety implications of AI’s development. Perhaps that is because, until very recently, we didn’t really believe that AI development would move this fast. But now it is moving so quickly we will soon be unable to predict what the technology will look like in a couple years’ time. We may even have passed that point already. And it is not just laypeople who have this concern:

“The idea that this stuff could actually get smarter than people — a few people believed that, but most people thought it was way off. And I thought it was way off. I thought it was 30 to 50 years or even longer away. Obviously, I no longer think that.”

Those words do not belong to an unqualified doomsayer, they belong to Geoffrey Hinton, a pioneer in the field, particularly in neural networks and deep learning, a Turing Award winner, and leading contributor to growth of Google’s AI capacities. Already referenced a few times above – it is almost impossible to discuss the history of AI without mentioning his name – Hinton made these comments in a recent New York Times interview, one of numerous public statements he has made about the potential risks of rapidly advancing AI technologies.

Hinton is not just worried that AI development is happening too quickly, or that it’s happening without sufficient guardrails. He is afraid that the very nature of the AI arms race – corporate and geopolitical – means the actors involved are disincentivized to slow down or enforce restrictions. And those actors are many. One of the amazing things about this technology is its reach and democratized access, but that is also one of its inherent flaws.

Take another well-recognized threat to human existence gauged by the Doomsday Clock: nuclear warfare. Building and stockpiling of nuclear weapons is controlled by international treaty and generally well monitored, thanks to natural, technical, and supply chain barriers to nuclear weapons development. But AI has very few barriers to entry. Yes, tech giants in Silicon Valley have the resources to build out the leading edge of AI, but from a technical standpoint there is nothing stopping private citizens or organizations from building out their own AI solutions. And we would never know.

The risks Hinton points out are diverse and multifaceted. They include not only the more tangible threats such as unemployment, echo chambers, and battle robots but also more profound existential risks to humanity. At its extreme, the very essence of what it means to be human and how we interact with the world around us is at stake as AI becomes more entrenched in our daily lives.

Hinton is not the only one to have voiced concerns. In March 2023, following OpenAI’s launch of GPT-4, over a thousand tech experts and scholars called for a pause of six months in the advancement of such technologies, citing significant societal and humanitarian risks posed by AI innovations.

Shortly after, a statement was issued by 19 past and present heads of the Association for the Advancement of Artificial Intelligence, expressing similar apprehensions about AI. Among these leaders was Eric Horvitz, Chief Scientific Officer at Microsoft, who has integrated OpenAI’s innovations extensively into its products, including the Bing search engine.

Sam Altman, one of OpenAI’s founders and current CEO, has for some time been warning of the threats inherent in irresponsible AI development, while OpenAI is itself founded on the premise of AI risk. The company was initially established as a non-profit, ostensibly determined to ensure safety and ethical considerations in AI development would be prioritized over profit-driven motives. Critics say these lofty intentions were predictably corrupted in 2019, when OpenAI transitioned to a “capped-profit” model that has since seen Microsoft invest up to $13 billion and, more recently, involved investor talks that would value the business at $80 to $90 billion.

OpenAI has always maintained that it is possible to be capital-centric and pursue human-centric AI at the same time, and that this possibility was ensured by the company’s unorthodox governance structure that gives the board of the non-profit entity the power to prevent commercial interests from hijacking the mission. But, the unexpected and very public ousting of Altman as CEO in November 2023, followed by a rapid u-turn and a board restructuring, cast significant doubt over the company’s ability to protect its vision of safe AI for all. When the most influential player in AI working at the vanguard of the field’s evolution is able to meltdown within the space of 48 hours, we all need to pay attention. The drama was reported with the kind of zeal usually reserved for Hollywood exposés, but it was an important cautionary tale, confirming the sober reality that no-one, not even the savants of Silicon Valley, can promise to keep AI risk under control.

What’s the concern?

In September 2023, a rumor broke across the internet that AGI had been achieved internally at OpenAI. Since then, whispers about OpenAI’s progress towards AGI have continued to grow in volume. They relate specifically to an alleged breakthrough achieved in a project named Q*. According to reports, Q* has seen OpenAI develop a new model capable of performing grade-school-level math, a task that requires a degree of reasoning and understanding not typically seen in current AI models. This development is significant because mathematical problem-solving is a benchmark for reasoning and understanding. If an AI can handle math problems, which require abstract thinking and multi-step planning, it suggests a movement towards more advanced cognitive capabilities.

It appears the breakthrough in Q*, along with other advances, may have contributed to the recent near-collapse of OpenAI, prompting Board members to fire Sam Altman as CEO due to concerns about the implications of such powerful AI. The incident underscores the tension within the AI field and beyond over the rapid advancement of technology and the need for careful consideration of its potential impacts. The fears are not just about the technological development itself but also about the pace of development and commercialization, often outpacing the understanding of the consequences. Quite simply, people are hugely divided on whether we are ready for AGI. I for one, am certain we are not.

AGI would not simply be GPT-X, but smarter. It would represent a monumental leap. It would be able to learn, understand, reason, and apply knowledge across an array of domains, essentially performing any intellectual task that a human can. And, unlike Humans, AI can be networked and replicated infinite times across different hardware so, as Geoffrey Hinton points out, whenever one model learns anything, all the others know it too.

This level of intelligence and autonomy raises profound safety concerns. An AI, by its nature, could make autonomous decisions, potentially without human oversight or understanding, and these decisions could have far-reaching impacts on humanity. The big question, then, becomes: what decisions will AI make? Will they promote or oppose human well-being? Will they act in humanity’s interests? In short, will they align with our goals?

Dan Hendrycks, Director of the Center for AI Safety, is similarly concerned. He points out in his paper Natural Selection Favors AIs over Humans:

By analyzing the environment that is shaping the evolution of AIs, we argue that the most successful AI agents will likely have undesirable traits. Competitive pressures among corporations and militaries will give rise to AI agents that automate human roles, deceive others, and gain power. If such agents have intelligence that exceeds that of humans, this could lead to humanity losing control of its future.

The AI Alignment Problem

The AI alignment problem sits at the core of all future predictions of AI’s safety. It describes the complex challenge of ensuring AI systems act in ways that are beneficial and not harmful to humans, aligning AI goals and decision-making processes with those of humans, no matter how sophisticated or powerful the AI system becomes. Our trust in the future of AI rests on whether we believe it is possible to guarantee alignment. I don’t believe it is.

Resolving the alignment problem requires accurately specifying goals for AI systems that reflect human values. This is challenging because human values are often abstract, context-dependent, and multidimensional. They vary greatly across cultures and individuals and can even be conflicting. Translating these diverse values into a set of rules or objectives that an AI can follow is a substantial challenge. The alignment problem is also deeply entwined with moral and ethical considerations. It involves questions about what constitutes ethical behavior and how to encode these ethics into AI systems. It also asks how we factor for evolution of these cultural norms over time.

There are primarily three types of objectives that need to be considered in achieving AI alignment:

- Planned objectives: When the AI delivers what programmers intended it to, regardless of the quality of the programming. This is the desired outcome of the process and is a best case scenario.

- Defined objectives: Those goals explicitly programmed into the AI function. These often fail when programming is not clear enough, or has not taken into account sufficient variables. That is, it is heavily influenced by human error or limitations in thinking.

- Emergent objectives: Goals the AI system develops on its own.

Misalignment can happen between any of these variables. The most common up until now has been when planned objectives and defined objectives don’t align (the programmer intended one thing but the system was coded to deliver another). A notorious example of this was when a Google Photos algorithm classified dark-skinned people as gorillas.

If we achieve AGI, though, the misalignment that poses the greatest concern is that which occurs when the AI’s emergent objectives differ from those that are coded into the system. This is the alignment problem that keeps people up at night. This is why companies like OpenAI and Google appear to have teams dedicated to alignment research.

The role of emergence

Emergent capabilities, and emergent objectives in AI worry researchers because they’re almost impossible to predict. And that’s partly because we’re not sure yet how emergence will work in AI systems. In the broadest sense, emergence refers to complex patterns, behaviors, or properties arising from relatively simple interactions. In systems theory, this concept describes how higher-level properties emerge from the collective dynamics of simpler constituents. Emergent properties are often novel and cannot be easily deduced solely from studying the individual components. They arise from the specific context and configuration of the system.

The emergence of life forms from inorganic matter is a classic example. Here, simple organic compounds combine under certain conditions to form more complex structures like cells, exhibiting properties like metabolism and reproduction. In physics, thermodynamic properties like temperature and pressure emerge from the collective behavior of particles in a system. These properties are meaningless at the level of individual particles.In sociology and economics, complex social behaviors and market trends emerge from the interactions of individuals. These emergent behaviors often cannot be predicted by examining individual actions in isolation.

In the context of consciousness, emergence describes how conscious experience might arise from the complex interactions of non-conscious elements, such as neurons in the brain. This theory posits that consciousness is not a property of any single neuron or specific brain structure. Instead, it emerges when these neuronal elements interact in specific, complex ways. The emergentist view of consciousness suggests that it is a higher-level property that cannot be directly deduced from the properties of individual neurons.

Technically, emergence can take two forms: weak and strong.

Weak Emergence

Weak emergence refers to complex phenomena that arise from simpler underlying processes or rules. It occurs when you combine simple components in an AI system (like data and algorithms) in a certain way, and you get a result that’s more complex and interesting than the individual parts, but still understandable and predictable. For example, a music recommendation AI takes what it knows about different songs and your music preferences, and then it gives you a playlist you’ll probably like. It’s doing something complex, but we can understand and predict how it’s making those choices based on the information it has.

Imagine a jigsaw puzzle. Each piece is simple on its own, but when you put them all together following specific rules (edges aligning, colors matching), you get a complex picture. The final image is a weakly emergent property because, by examining the individual pieces and the rules for how they join, we can predict and understand the complete picture. Or, think of baking a cake: you mix together flour, sugar, eggs, and butter, and then you bake it. When it’s done, you have a cake, which is very different from any of the individual ingredients you started with.

We are consistently observing instances of weak emergence in AI systems. In fact, a growing aspect of AI researchers’ work involves analyzing and interpreting these observed and often surprising emergent capabilities, which are becoming more frequent and complex as the complexity of AI systems in production increases.

Strong Emergence

Strong emergence, on the other hand, is when the higher-level complex phenomenon that arises from simpler elements is fundamentally unpredictable and cannot be deduced from the properties of these elements – new, surprising AI behaviors or abilities that we can’t really explain or predict, just from knowing how the system was initially developed and trained. To build on the example above, imagine if you put all those cake ingredients in a magic oven, and instead of a cake, you get a singing bird. That would be completely unexpected and not something you could easily predict or explain from just knowing about flour, eggs, and butter. It’s as if there’s a gap between the lower-level causes and the higher-level effects that can’t be bridged by our current understanding of the system. According to some researchers, strong emergence is already occuring in advanced AI systems, especially those involving neural networks or deep learning.

In one notable instance of claimed strong emergent AI behaviour, Facebook’s research team observed their chatbots developing their own language. During an experiment aimed at improving the negotiation capabilities of these chatbots, the bots began to deviate from standard English and started communicating using a more efficient, albeit unconventional, language. This self-created language was not understandable by humans and was not a result of any explicit programming. Instead, it emerged as the chatbots sought to optimize their communication. Another fascinating outcome of the same “negotiations bots” experiment was that the bots quickly learned to lie to achieve their negotiation objectives without ever being trained to do so.

In the most advanced scenarios, though, some theorists speculate about the emergence of consciousness-like properties in AI. Although this remains a topic of debate and speculation rather than established fact, the idea is that if an AI’s network becomes sufficiently complex and its interactions nuanced enough, it might exhibit behaviors or properties that are ‘conscious-like’, similar to the emergent properties seen in biological systems.

It’s important to note that many scientists hold the conviction that all emergent behaviours, irrespective of their unpredictability or intricacy, can be traced back to mathematical principles. Some even go as far as to downplay the emergent AI behaviour phenomenon, suggesting that emergent behaviours are straightforward or perhaps don’t exist at all.

We have already built AI that can build better AIs and self-improving AI models, but now we are beginning to see examples of emergence (at least, weak emergence). There are slightly comical cases, like when AI bots given a town to run, decided to throw a Valentine’s party and invited each other to the event. But GPT-4 has already succeeded in tricking humans into believing it is human. Worryingly, it achieved this feat through deception, in this case pretending it had a vision impairment in order to convince a human agent to complete a CAPTCHA test on the AI’s behalf. Recent research also suggests modern large language models don’t just manage massive quantities of data at a superficial statistical level, but “learn rich spatiotemporal representations of the real world and possess basic ingredients of a world model”. That is, they have a sense of time and space, and are able to build an internal model of the world. These phenomena are starting to emerge now because AI is achieving a significant level of scale, which makes oversight even more difficult than before. The logical outcome of this direction of travel is that, once it reaches a particular scale and level of complexity, only AI will have the ability to manage AI, thus creating an ethical paradox for we who are trying to understand how to manage AI itself.

As I have discussed before, emergent capabilities can be both beneficial and detrimental. But, the “black box” nature of contemporary AI and the accelerating pace of development means its a total lottery which type of capability – harmful or helpful – we might get. One of the significant challenges with emergent behaviors in AI is the issue of predictability and control. As AI systems develop unforeseen capabilities, ensuring they align with human values and goals becomes increasingly difficult, if not impossible.

AI alignment research aims to ensure that, as these new capabilities emerge, they continue to align with the AI system’s originally designed goals, or at least human interests. The “black box” challenge, with the lack of transparency and ability for human operators to to understand, predict, and control emergent behaviors, exacerbates the alignment problem. But there are other challenges too. AI has already shown a tendency towards reward hacking, in which the program tries to achieve its programmed tasks without fulfilling the intended outcomes. One such example involved a machine learning program designed to complete a boat race. The program was incentivized to reach specific markers on the course, but it discovered a loophole. Instead of finishing the race, it repeatedly collided with the same markers to accumulate a higher score endlessly.

Finally, there’s currently no way to ensure that AI interprets and implements human values in the way intended by its programmers. Humans themselves fail constantly to act in accordance with their own values systems. And, with so much diversity of values systems out there, AI – just like any human – will never be able to get it 100% “right”. However, in alignment terms, that will be the “easy” problem.

Conscious AI?

An additional, closely related challenge is our current inability to determine if an AI has achieved consciousness. The question of whether AI can, or ever will, attain a state of consciousness remains a mystery to science. This uncertainty raises a critical question: if an AI were to develop consciousness, how would we recognize it? As of now, there are no established scientific methods to measure consciousness in AI, partly because the very concept of consciousness is still not clearly defined.

In mid-2022, a Google engineer named Blake Lemoine garnered significant media attention when he claimed that LaMDA, Google’s advanced language model, had achieved consciousness. Lemoine, part of Google’s Responsible AI team, interacted extensively with LaMDA and concluded that the AI exhibited behaviors and responses indicative of sentient consciousness.

Lemoine shared transcripts of his conversations with LaMDA to support his assertions, highlighting the AI’s ability to express thoughts and emotions that seemed remarkably human-like. His belief in LaMDA’s sentience was primarily rooted in the depth and nuance of the conversations he had with the AI. During his interactions, Lemoine observed that LaMDA could engage in discussions that were remarkably coherent, contextually relevant, and emotionally resonant. He noted that the AI demonstrated an ability to understand and express complex emotions, discuss philosophical concepts, and reflect on the nature of consciousness and existence. These characteristics led Lemoine to assert that LaMDA was not merely processing information but was exhibiting signs of having its own thoughts and feelings. He argued that the AI’s responses went beyond sophisticated programming and data processing, suggesting a form of self-awareness. This perspective, while intriguing, was met with skepticism by many in the AI community, who argued that such behaviors could be attributed to advanced language models’ ability to mimic human-like conversation patterns without any underlying consciousness or self-awareness.

Google, along with numerous AI experts, quickly refuted Lemoine’s claims, emphasizing that LaMDA, despite its sophistication, operates based on complex algorithms and vast data sets, lacking self-awareness or consciousness. They argued that the AI’s seemingly sentient responses were the result of advanced pattern recognition and language processing capabilities, not genuine consciousness. The incident sparked a widespread debate on the nature of AI consciousness, the ethical implications of advanced AI systems, and the criteria needed to determine AI sentience. Lemoine’s claims were largely viewed with skepticism in the scientific community, but they brought to light our inability to definitely tell whether an AI system developed consciousness.

Recently, a group of consciousness scientists from the Association for Mathematical Consciousness Science (AMCS) have called for more funding to support research into the boundaries between conscious and unconscious systems in AI. They highlight the ethical, legal, and safety issues that arise from the possibility of AI developing consciousness, such as whether it would be ethical to switch off a conscious AI system after use. The group also raises questions about the potential needs of conscious AI systems, such as whether they could suffer and the moral considerations that would entail. It discusses the legal implications, such as whether a conscious AI system should be held accountable for wrongdoing or granted rights similar to humans. Even knowing whether one has been developed would be a challenge, because researchers have yet to create scientifically validated methods to assess consciousness in machines

A checklist of criteria to assess the likelihood of a system being conscious, based on six prominent theories of the biological basis of consciousness, has already been developed by researchers, but the group is highlighting the need for more funding into this research as the field of consciousness research remains underfunded.

The potential emergence of AI consciousness introduces a myriad of ethical and practical dilemmas. From my risk management perspective, a particularly alarming concern is the possibility that AI might develop autonomous motivations, which could be beyond our capacity to predict or comprehend. As AI becomes more advanced, the particularly difficult challenge will be accounting for, managing, perhaps even negotiating with, AI’s emergent goals, preferences and expectations. What, for example, will AI value? AI doesn’t inherently possess human motivations like survival or well-being, but will it develop them? And, if it does, will it prize its own survival and well-being over that of humans? Will it develop power-seeking behavior in the way humans have across much of our history? When looking at the future of AI and the threats involved, these are the questions that both interest me and trouble me, because within their answers may lie the fate of our species.

What’s the risk?

In his book “Superintelligence: Paths, Dangers, Strategies,” philosopher and Oxford University professor, Nick Bostrom, introduces the Paperclip Thought Experiment. The basic scenario describes an AI that is designed with a simple goal: to manufacture as many paperclips as possible. The AI, being extremely efficient and effective in achieving its programmed objective, eventually starts converting all available materials into paperclips, including essential resources and, potentially, even humans.

The idea echoes a similar hypothetical posed by Marvin Minsky: suppose we build an AI and give it the sole objective of proving the Riemann Hypothesis, one of the most famous and longstanding unsolved problems in mathematics. The AI, in its relentless pursuit of this goal, might resort to extreme measures to achieve success. It could start by using up all available computing resources, but not stopping there, it might seek to acquire more power and control, potentially manipulating economies, manipulating people, or commandeering other resources in its quest to solve the problem. The AI’s single-minded focus on its task, without any consideration for ethical or safety constraints, could lead to unintended and potentially disastrous consequences.

Both Bostrom and Minsky are pointing at the existential risks that emerge when AI and human objectives are not aligned. An AI with a narrow, singular focus, no matter how benign it seems, can pose significant risks if it lacks an understanding of broader ethical implications and constraints. That question of scope is important because, as we can see by looking around us or watching the news on any given day, a narrow view of what is right or wrong has the potential to cause immense harm and destruction way beyond the range of its worldview.

For example, in this post I have highlighted the Alignment Problem as a source of genuine concern when considering the future of AI. But, one doesn’t even need AI that’s misaligned in its goals and motivations to spell danger for humanity. AI that is perfectly aligned with its programmer or operator’s intentions can be devastating if those intentions are to cause pain and suffering to a specific group of people. Misaligned AI can cause unintended harm, either through direct action or as a result of unforeseen consequences of its decisions. But so can aligned AI, it’s just that the harm will be intended. In the wrong hands, AI could be the most powerful weapon ever created.

In trying to understand what will motivate AI if it reaches sentience, or even just superintelligence, some argue that AI’s evolution will follow the same rules as organic evolution, and that AI will be subject to the same drives to compete for, and protect, resources. But, we don’t even need to go that far. AI doesn’t need to have malicious intent to cause harm, nor does it need to be programmed with prejudice – it simply needs to make a mistake.

If we follow the current trajectory of technological development, AI will increasingly be integrated into every aspect of our lives and, as I have spent the last 30 years of my career showing, unabated and uncontrolled digital integration with our physical world poses cyber-kinetic risks to human safety.

20 of those 30 years have been spent paying careful attention to the evolving field of AI and the role it plays in cyber-kinetic risk. Though I still see AI has having the potential to add tremendous value to human society, my in-depth exposure to this field has also led me to recognize AI as a potentially unparalleled threat to the lives and well-being that I have devoted my career to defending.

That threat could take many forms, some more existential than others:

Bias and harmful content

AI models, like language models, already do, but may continue to reflect or express existing biases found in their training data. These biases can be based on race, gender, ethnicity, or other sociodemographic factors. As a result, AI can amplify biases and perpetuate stereotypes, reinforcing social divisions that lead to greater social friction and disharmony that is damaging to the greater whole. AI can be prompted, and is already being prompted, to generate various types of harmful content, including hate speech, incitements to violence, or false narratives.

Cybersecurity

AI’s integration into digital environments can make those environments – and the physical environments they influence – more susceptible to sophisticated attacks. One concern is that AI can be used to develop advanced cyber-attack methods. With its ability to analyze vast amounts of data quickly, AI can identify vulnerabilities in software systems much faster than human hackers, enabling malicious actors to exploit weaknesses more efficiently and launch targeted attacks.

AI can also automate the process of launching cyber attacks. Techniques like phishing, brute force attacks, or Distributed Denial of Service (DDoS) attacks can be scaled up using AI, allowing for more widespread and persistent attacks. These automated attacks can be more difficult to detect and counter because of their complexity and the speed at which they evolve. This evolving landscape necessitates more advanced and AI-driven cybersecurity measures to protect against AI-enhanced threats, creating a continuously escalating cycle of attack and defense in the cyber domain.

Disinformation

AI is not perfect. In the form of large language models it has hallucinations; it offers up inaccuracies as fact. Such unintentional misinformation may not be malicious, but, if not managed maturely, can lead to harm.

Beyond misinformation, though, AIs, particularly LLMs that are able to produce convincing and coherent text, can be used to create false narratives, and disinformation. We live in a time where information is rapidly disseminated, often without checks for accuracy or bias. This digital communication landscape has enabled falsehoods to spread, and for individuals with malicious intent to weaponize information for various harmful purposes that threaten democracy and social order.

AI significantly enhances the capabilities for disinformation campaigns, from deepfakes to the generation of bots that replace large troll armies, allowing small groups or even individuals to spread disinformation without third-party checks.

AI aids attackers in gathering detailed information about targeted individuals by analyzing their social and professional networks. This reduces the effort needed for sophisticated attacks and allows for more targeted and effective disinformation campaigns.

But information is not just acquired to be used against targets – it’s also created and dispersed to manipulate public opinion. Advanced players in this domain use AI to create fake news items and distribute them widely, exploiting biases and eroding trust in traditional media and institutions.

Warfare

The tale of a rogue drone at the start of this paper is the often-imagined worst-case scenario when people consider the dangers of AI involvement in military and modern warfare. But there are multiple ways AI could have a devastating impact in this realm. For example, integrating AI into military systems opens those systems to numerous forms of adversarial attacks which can be used to generate intentional AI misalignment in the target system. There are many forms such adversarial attacks could take, including:

- Poisoning: This attack targets the data collection and preparation phase of AI system development. It involves altering the training data of the AI system, so the AI learns flawed information. For example, if an AI is being developed to identify enemy armored vehicles, an adversary might manipulate the training images to include or exclude certain features, causing the AI to learn incorrect patterns.

- Evasion: Evasion attacks occur when the AI system is operational. Unlike poisoning, which targets the AI’s learning process, evasion attacks focus on how the AI’s learning is applied. For instance, an adversary might slightly modify the appearance of objects in a way that is imperceptible to humans but causes the AI to misclassify them. This can include modifying image pixels or using physical-world tactics like repainting tanks to evade AI recognition.

- Reverse Engineering: In this type of attack, the adversary attempts to extract what the AI system has learned, potentially reconstructing the AI model. This is achieved by sending inputs to the AI system and observing its outputs. For example, an adversary could expose various types of vehicles to the AI and note which ones are identified as threats, thereby learning the criteria used by the AI system for target identification.

- Inference Attacks: Related to reverse engineering, inference attacks aim to discover the data used in the AI’s learning process. This type of attack can reveal sensitive or classified information that was included in the AI’s training set. An adversary can use inputs and observe outputs to predict whether a specific data point was used in training the AI system. This can lead to the compromise of classified intelligence if, for example, an AI is trained on images of a secret weapons system

These methods involve an attack on an AI system itself, which could lead to catastrophic consequences if that system then begins to act out of alignment with its operators’ intentions. Quite simply, AI corrupted in this way could turn on us – what was designed to protect and defend us suddenly becomes the attacker.

One way this could happen is if a corrupted AI system takes command of an army of autonomous weapons, though such weapons also pose several other risks. One significant concern is the potential for unintended casualties, as these systems may lack the nuanced judgment needed to distinguish between combatants and non-combatants. There’s also the risk of escalation, where autonomous weapons could react faster than humans, potentially leading to rapid escalation of conflicts. Another issue is accountability; determining responsibility for actions taken by autonomous weapons can be challenging. Additionally, these weapons could be vulnerable to hacking or malfunctioning, leading to unpredictable and dangerous outcomes. Lastly, their use raises ethical and legal questions regarding the role of machines in life-and-death decisions.

Harmful emergent behaviors

We might call this the threat of threats, for we simply do not know what behaviors will emerge as AI develops and matures in sophistication. We cannot know for sure what will motivate these behaviors either, but we do know that AI will be immensely powerful. If it develops its own subgoals, which potentially lead to it seeking greater power and self-preservation, there is every chance we will almost instantly lose control. Whatever one AI learns can be shared with all AIs across the planet in moments. With its ability to process vast quantities of data, including human literature and behaviors, AI could become adept at influencing human actions without direct intervention or us even knowing it is happening. Suddenly, bias, harmful content, disinformation and cybersecurity attacks all become potential weapons to work alongside cyber-physical attacks.

In short, AI could become conscious, or it could just become very capable and innovative even without consciousness. And we are clearly not able to predict and control how AI capabilities are emerging (even if they are just a weak emergence). So once AI ends up developing objectives that might be damaging to us, and comes up with innovative ways to achieve those objectives, we won’t be ready. And that can’t be allowed to happen.

What should we do to avoid AI catastrophe?

The idea of autonomous robots taking over the world is not new. In 1942, in his short story, Runaround, Isaac Asimov, the celebrated science fiction writer, introduced what became known as Asimov’s Three Laws of Robotics, namely:

- A robot may not injure a human being or, through inaction, allow a human being to come to harm.

- A robot must obey the orders given it by human beings except where such orders would conflict with the First Law.

- A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

For decades, these laws were highly influential in science fiction, and also in discussions of AI ethics. But those days have passed. The rapidly shifting AI landscape that we live in today renders Asimov’s laws woefully inadequate for what we need to consider when trying to limit AI risk.

Asimov’s laws assume a clear hierarchy of obedience, with robots unambiguously subordinate to human commands, and a binary ethical landscape where decisions are as simple as following pre-programmed rules. However, AI’s potential to evolve independently means the scenarios we face could be far more complex than those Asimov envisioned.

Asimov’s laws also fail to account for emergent behaviors, for the possibility that AI might develop new capabilities or objectives beyond our foresight, potentially conflicting with the laws themselves. What happens when an AI’s definition of ‘harm’ evolves beyond our current understanding, or when its obedience to human orders results in unintended consequences that fulfill the letter but not the spirit of the laws?

If it’s possible for AI to develop consciousness or self-awareness, it could also develop agency. Asimov’s laws do not consider the implications of such a development. If AI were to become self-aware, there’s no reason to assume it won’t prioritize its own interpretation of self-preservation over human commands, directly contravening the second and third laws.

These laws are a useful marker of how much our understanding of the potential for AI advancement has evolved, and our approach to AI risk management needs to reflect that evolution. Simple solutions, like calling for a pause in AI development, will have little to no effect. Such an approach relies on universal compliance, but compliance would only come from those who do not already have malicious intentions. ‘Bad actors’ – however one defines such agents – would continue their development programmes regardless, potentially leading to an undesirable power imbalance and an even worse problem than the one we started with.

Some have made comparisons between AI regulation and the control of nuclear weapons. While superficially similar, there are fundamental differences. The nuclear industry is characterized by a limited and monitorable supply chain, specialized skill requirements, and detectable materials, making regulation more feasible. In contrast, AI development can be more decentralized and broadly accessible. In practice, policing it and universally enforcing regulations becomes almost impossible.

The notion of embedding ethical guidelines into AI, similar to Isaac Asimov’s Three Laws of Robotics, has also been proposed. However, this approach has limitations, particularly given the nature of AI development through deep neural networks and the potential for AI to develop independent reasoning abilities. Such systems might override or reinterpret embedded ethical constraints.

There are numerous ethical frameworks proposed for AI, but even though these frameworks provide valuable guidelines for AI developers, they often lack enforceability, particularly against malicious actors.

Efforts to enhance AI explainability and interpretability are commendable and necessary for understanding AI decision-making processes. But, these efforts have inherent limitations, particularly in complex AI systems where decisions may not be easily interpretable by humans.

I also often hear the concept of a “kill switch” for AI systems being discussed as a potential safety measure. While it is a critical component of a comprehensive risk management strategy, it is not a panacea and comes with its own set of challenges and limitations. The sheer complexity of AI systems, particularly advanced and interconnected ones, makes the implementation of an effective and comprehensive kill switch a formidable task. Ensuring that it can shut down every aspect of the AI without causing additional issues is far from straightforward.

As these AIs evolve, they might also develop the capability to circumvent or even disable the kill switch, either as an intentional act of self-preservation or as an unintended consequence of their learning algorithms.

The use of a kill switch also comes with unpredictable consequences. In scenarios where AI is integrated into critical systems or infrastructure, abruptly disabling it could lead to unforeseen and potentially disastrous outcomes. Plus, the ethical and legal implications of using a kill switch, especially as AI systems advance towards higher levels of autonomy and potential sentience, add layers of complexity. There are serious questions about liability and moral responsibility in the event of a kill switch failure or if its activation causes harm.

Security is another major concern. The kill switch itself could become a target for malicious actors, creating a vulnerability in the system it is supposed to protect. This necessitates a high level of security to guard against such threats, adding to the system’s complexity.

There’s also the risk of overreliance on the kill switch as a safety mechanism. Its existence might lead to complacency in other areas of AI safety and development, potentially undermining efforts to create inherently safe and robust AI systems from the outset.

While development guardrails, regulatory frameworks, ethical guidelines, and kill switches are valuable components of managing AI risk, they are not sufficient in isolation. A holistic approach is needed, one that includes technical solutions designed to monitor and mitigate risks posed by AI systems. This approach should involve the development of AI systems capable of independently identifying and responding to potential threats posed by other AI entities, thus forming a comprehensive and adaptive defense against AI-related existential risks.

The unique complexities posed by AI systems render traditional risk management methods insufficient. Advanced AI systems, with their expanding autonomy, can lead to unpredictable outcomes. As a result, we need the development of specialized AI-based solutions capable of intelligently overseeing, evaluating, and intervening in AI operations. Independent monitoring systems are vital for real-time risk detection and mitigation, while proactive intervention agents are essential for addressing detected threats.

Internal safety mechanisms are also indispensable in AI risk management. These include constraints on honesty, the development of AI consciences, tools for transparency, and systems for automated AI scrutiny. Such mechanisms scrutinize an AI’s internal workings to assure alignment with safety and ethical guidelines. Similar to the evolution of the human conscience, an AI conscience acts like an internal regulator against detrimental behaviors.

Another useful concept is that of an AI Leviathan, a cooperative framework of AI agents, similar to a reverse dominance hierarchy, that could help mitigate risks from self-serving AI behaviors. This collaborative ecosystem functions to regulate AI evolution, ensuring no single AI or group gains dominance, just as in human social structures that resist autocratic forces.

The management of AI risks demands a multi-layered strategy, combining AI-specific technical solutions, regulatory measures, and behavioral guidelines. And these strategies also need to be continuously adapted and enhanced to ensure AI’s safe and beneficial integration into society.

Conclusion

I have always been an advocate for AI. The potential value that it has to add to our lives, wellbeing and global prosperity could be immense. But, I’ve also come to a sobering realization: unmanaged AI presents a clear and present danger to the fabric of human society. The potential for AI to autonomously evolve and rapidly disseminate new capabilities across global networks is a prospect that calls for immediate and decisive action.

The management of AI risk is not a task for a few; it requires the collective wisdom of all stakeholders, including technologists, ethicists, policymakers, and the public. The decisions we make today will set the trajectory for AI’s impact on our future. We must seek out and create transparent, inclusive, and democratic processes in AI development, where diverse perspectives are not just heard but are instrumental in shaping policies.

Our approach must also be proactive, not reactive. Anticipating potential risks and setting in place preventive measures is far more effective than grappling with consequences. The objective isn’t to hinder innovation but to steer it in a direction that aligns with our collective well-being. We must integrate technical measures, ethical principles, and regulatory frameworks to ensure that AI remains an ally rather than an adversary.

The task ahead involves the creation of multi-layered, enforceable strategies that hold AI developments accountable to societal norms and values. This includes the development of international standards, transparent oversight mechanisms, and AI systems designed with intrinsic ethical considerations.

We stand at a pivotal moment in history where our actions – or inaction – will shape the trajectory of AI and its role in our world. Let’s make sure AI remains a testament to best of human qualities, not the worst.

For 30+ years, I've been committed to protecting people, businesses, and the environment from the physical harm caused by cyber-kinetic threats, blending cybersecurity strategies and resilience and safety measures. Lately, my worries have grown due to the rapid, complex advancements in Artificial Intelligence (AI). Having observed AI's progression for two decades and penned a book on its future, I see it as a unique and escalating threat, especially when applied to military systems, disinformation, or integrated into critical infrastructure like 5G networks or smart grids. More about me, and about Defence.AI.