The Dual Risks of AI Autonomous Robots: Uncontrollable AI Meets Cyber-Kinetic Risks

The automotive industry has revolutionized manufacturing twice.

The first time was in 1913 when Henry Ford introduced a moving assembly line at his Highland Park plant in Michigan. The innovation changed the production process forever, dramatically increasing efficiency, reducing the time it took to build a car, and significantly lowering the cost of the Model T, thereby kickstarting the world’s love affair with cars. The success of this system not only transformed the automotive industry but also had a profound impact on manufacturing worldwide, launching the age of mass production.

The second time was about 50 years later, when General Motors installed Unimate, the world’s first industrial robot, on its assembly line at the Inland Fisher Guide Plant in New Jersey. Initially used to handle hot pieces of metal from die casting machines, Unimate was a large robotic arm designed to take over tasks that were particularly dangerous for humans. But its impact went far beyond improvements to safety and efficiency. Integrating robotics into manufacturing was the beginning of a new era in industrial production.

A few decades on and more than one million robots work in the automotive industry alone, with many more underpinning operations and production in varied sectors, from healthcare to logistics to entertainment. There are approximately 3.9 million operational robots in service around the world today, but this is more than a numbers game. What is most notable about the times we are living through right now is not how many robots there are, but what robots are becoming.

The global robotics market, valued at $63 billion in 2022, is projected to reach $218 billion by 2030, with a CAGR of 17%. This exponential growth is propelled not just by advancements in hardware but, more critically, by the integration of sophisticated AI technologies that promise to redefine the boundaries of what robots can achieve.
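As a quick sanity check on the figures quoted above, the growth rate implied by the two market valuations can be recomputed with the standard compound annual growth rate formula. This is a minimal sketch; the function name `cagr` is purely illustrative, and the only inputs are the numbers already given in the text.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over a number of years."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures quoted in the text: $63 billion (2022) growing to $218 billion (2030).
rate = cagr(63.0, 218.0, 2030 - 2022)
print(f"Implied CAGR: {rate:.1%}")
```

The result comes out at roughly 17%, which is consistent with the projection cited.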

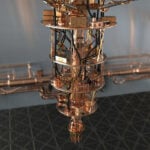

The rise of generative AI has been nothing short of revolutionary, largely driven by rapid advancements in Large Language Models (LLMs) like OpenAI’s GPT-4 and Google DeepMind’s Gemini. But the transition of AI and LLMs from purely digital realms into the physical world through robotics is perhaps the most significant step yet. Roboticists are applying approaches similar to those used to create general-purpose AI models in order to build general-purpose robots whose capabilities stretch beyond repetitive tasks.

Robots are also increasingly capable of understanding natural language instructions, learning, adapting, and making decisions in real-time. Thanks to the infusion of AI, robots are no longer confined to the monotonous tasks of a production line but are stepping into roles that require interaction and adaptability, from customer service to caregiving.

At the moment, the biggest buzz in the market is reserved for AI-driven humanoid robots. These robots, with their bipedal locomotion and human-like dexterity, can be integrated into environments traditionally reserved for humans, and are poised to revolutionize sectors such as healthcare, logistics, manufacturing, and service industries. Goldman Sachs sees the market for humanoids growing to $38 billion by 2035, with 250,000 units expected to be sold by 2030; the Chinese Ministry of Industry and Information Technology is committed to mass-producing humanoids by 2025.

But even as we marvel at these advancements, the flip side of this technological coin reveals deep-seated concerns. The proliferation of AI and robotics in our lives introduces complex cybersecurity challenges and the specter of cyber-kinetic risks. AI is already having a negative impact on individuals and society through phenomena like deepfakes and disinformation, but the gravest risks to humanity, the existential ones, are always going to be cyber-physical. Robots are the epitome of cyber-physical systems (CPS), and the more autonomous and human-like we make them, and the more deeply we integrate them into our daily lives, the greater the potential for misuse or malfunction with serious real-world implications.

While the vision of a future where humanoids and AI-powered machines seamlessly fit into our daily lives is compelling, it brings to the fore crucial dialogues on safety. As we embrace the marvels of AI and robotics, ensuring these tools benefit humanity while mitigating their risks becomes paramount. This is something we all need to pay attention to, but in countries like the Kingdom of Saudi Arabia (KSA), which are seeing radical investment in leading-edge technological growth, it’s a crucial area of focus.

AI and Robotics: A New Frontier

The current explosion in AI-driven robotics is largely fueled by significant enhancements in machine learning algorithms and visual processing. Google DeepMind, for example, has introduced AutoRT, which uses large foundation models to improve robots’ understanding of human commands and intentions. This system leverages Visual Language Models (VLMs) for better situational awareness and orchestrates a fleet of robots to perform a variety of tasks with enhanced efficiency. DeepMind’s RT-Trajectory, which employs video input for robotic learning, boosts the success rate of robot training by making use of the rich motion information present in datasets.

Sensor technology, another cornerstone of modern robotics, has seen exponential growth. Today’s robots are equipped with advanced sensors that enable them to perceive their surroundings with unprecedented accuracy. These sensors not only help robots navigate complex environments but also allow them to interact safely and effectively with humans and other objects. The integration of sophisticated vision systems, tactile sensors, and environmental awareness technologies has made robots more autonomous and capable of performing tasks in unstructured settings.

On the software front, robotics developers are creating more intuitive and flexible platforms, allowing for the rapid deployment and adaptation of robots in various industries. The rise of digital twin technology, for example, enables the virtual simulation of physical robots, allowing engineers to optimize designs and operational strategies before physical deployment. This blurs the lines between digital and physical realms, accelerating innovation and reducing time-to-market for new robotic applications.

The landscape of AI robotics is being shaped by an array of startups and tech giants venturing into the development of general-purpose humanoid robots. Heavily funded Chinese outfit UBTECH Robotics has already deployed its Walker S robot on car manufacturer NIO’s EV assembly line, marking a significant step in automation.

Figure AI, a startup based in Sunnyvale, California, has also made notable progress, securing $675 million in Series B funding at a valuation of $2.6 billion. A crucial collaboration with OpenAI seeks to enhance robot capabilities in natural language processing and task execution, marking a major step towards creating more natural methods of communication between robots and humans. In what’s shaping up to be the next tech gold rush, the company has attracted a consortium of high-profile investors, including Microsoft, OpenAI Startup Fund, NVIDIA, Amazon Industrial Innovation Fund, and Jeff Bezos, and recently announced a commercial agreement with BMW Manufacturing to bring general-purpose robots into automotive production.

Sanctuary AI has also been making headlines with its advanced AI-powered humanoid robot, Phoenix™, which has been designed to perform a wide variety of tasks alongside humans in work environments. The company recently garnered a strategic investment from Accenture, highlighting the potential of Phoenix™ in industries facing labor shortages, such as post and parcel, manufacturing, retail, and logistics warehousing. Phoenix™, powered by Sanctuary AI’s AI control system Carbon™, mimics human brain subsystems and translates natural language into action, demonstrating capabilities such as fine manipulation and task execution that rival human dexterity.

Meanwhile, Amazon-backed Agility Robotics is preparing to produce 10,000 units a year of its humanoid robot, Digit, and Tesla continues development of its Optimus humanoid bot, Gen 2 of which can handle eggs without cracking them and, apparently, dance.

Despite this rapid progress, true general-purpose robots – robots that can navigate unstructured environments and solve problems in real time – may still be a way off. Getting this right requires more than sophisticated machine learning or AI; it requires huge advances in multiple fields, including materials science and control systems. Then there are the commercial challenges of building user trust and achieving competitive pricing. A versatile, general-purpose domestic robot seems even further away, hindered by affordability, ethical concerns, and user acceptance.

Nevertheless, the direction of travel is clear, and the investment is there. AI robots are here to stay and they will only become more impressive. While this is an exciting prospect that has the potential to reshape the world we live in (imagine: what do society and the economy look like when we don’t need human labor?), we will only reap the fruits of transformation if we pay attention to safety and security.

Merging Fears: Robots, Uncontrollable AI and Cyber-Kinetic Catastrophe

Many people are afraid of ‘Rogue AI’ – an autonomous, ‘sentient’ (whatever that means) system that breaks free of its human overlords and ultimately turns on them. I have shared my own concerns on this subject before, though admittedly with a bit less melodrama. What most people are missing, however, is that they are not actually afraid of AI. They are afraid of the cyber-physical consequences of AI. AI in and of itself could certainly cause a fair degree of chaos by sabotaging and corrupting data and information, but it is only through engaging cyber-physical systems (CPS) that AI can threaten us physically and wreak the kind of havoc imagined in dystopian sci-fi like Terminator, The Matrix and I, Robot.

Cyber-physical systems represent a blending of computing, networking, and physical processes. Embedded computers and networks monitor and control the physical processes, usually with feedback loops where those processes affect computations and vice versa. The vast majority of systems in this category form part of a massive internet of things (mIoT), and they range from autonomous vehicles, medical monitoring, and process control systems to smart grids and robotics. If you imagine this mIoT as a web that extends into and connects almost every corner of modern human experience, you get a sense of the complexity and importance of CPS in modern infrastructure and services, and begin to appreciate what makes them significant targets for cyber attacks.
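The feedback loop described above can be illustrated with a toy simulation. This is only a sketch under simplifying assumptions: a single one-dimensional "position", a perfect sensor, and a plain proportional controller. The names `simulate`, `gain`, and `target` are hypothetical and do not correspond to any real robot API.

```python
def simulate(target: float, steps: int = 50, gain: float = 0.3) -> list[float]:
    """Run a toy sense-compute-actuate loop and return the position history."""
    position = 0.0  # the physical state (e.g. a joint angle)
    history = []
    for _ in range(steps):
        measured = position        # sensing: the computer reads the physical state
        error = target - measured  # computing: compare the reading with the goal
        command = gain * error     # computing: decide how far to move
        position += command        # actuating: the command changes the physical state
        history.append(position)
    return history


trajectory = simulate(target=1.0)
print(f"after 5 steps:  {trajectory[4]:.3f}")
print(f"after 50 steps: {trajectory[-1]:.3f}")
```

Each pass through the loop shows the two-way coupling: the physical state determines the next computation, and the computation determines the next physical state. Anything that corrupts either half of the loop therefore ends up affecting the physical world.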

The threat posed by cyber attacks on CPS is multifaceted and profound due to the direct impact on physical safety and human life. Unlike traditional cyber attacks that might target data theft or financial loss, attacks on CPS have the potential to cause physical destruction and harm, especially since many are relied on to ensure the smooth operation of critical infrastructure. For instance, a malicious actor could hack into the control system of a chemical plant, manipulating the controls to cause spills or explosions, or into a smart grid to cause widespread power outages, directly impacting public safety and health.

In one way, robots represent a more direct and tangible cyber-physical risk. The trajectory of robot development means we are bringing robots deeper and deeper into the intimacy of human experience, closer and closer physically and socially. One of the biggest trends over the past few years has been the growth in cobots (collaborative robots) – robots intended to work side-by-side with humans. Unlike traditional industrial robots, which are often isolated from humans for safety reasons, cobots are designed with advanced sensors, software, and safety mechanisms that allow them to share a workspace with human workers safely. This collaboration can enhance productivity, efficiency, and flexibility in tasks ranging from manufacturing and assembly to packaging and material handling, provided, of course, that the software and network managing the cobot remain secure.

Robots are also becoming more pervasive, moving out of ‘traditional’ spheres like manufacturing and logistics into novel public and private roles. Autonomous Mobile Robots (AMRs) are already stepping (or rolling) out of warehouses and into airports and farms. And, as AI improves communicative ability, robots will increasingly be found in environments like restaurants, hotels, hospitals, transport stations and offices, where they can engage with people on management’s behalf.

These trends will be most amplified in smart cities and related ecosystems. In KSA’s NEOM development, for example, advanced robotics will play a crucial role in fulfilling Oxagon’s promise of the world’s first fully integrated supply chain operating on a single cyber-physical meta-network. Robots also form a crucial part of the urban and residential vision for NEOM, as human living is made easier and more convenient by robotic assistance.

All of this points to an increase in depth and scale. Robots are being woven more intricately into the cyber-physical fabric of daily life, which is amazing. Until it’s not. Malicious cyber-physical attacks can compromise robots in multiple ways, including causing physical damage to the robots themselves and to their surrounding environment, altering their networking functions, and compromising their operating systems.

Physical Attacks

Physical layer vulnerabilities include hardware-based threats to cyber-physical systems, where attackers exploit individual components and create diverse and complex security challenges. Hardware attacks might target the micro-controllers of robotic vehicles, influencing motor performance or causing direct physical damage through erroneous commands.

The repercussions of physical attacks include physical damage to the robots and their surrounding environments and, in severe cases, injuries or fatalities to humans. Robots may crash, move erratically, halt, or otherwise deviate from their intended behavior.

Networking Attacks

Networking attacks involve malicious actions conducted remotely, without needing physical access to the robots. These include false data injection, scaling attacks, stealthy attacks on sensors, Denial of Service (DoS), spoofing, and signal interference, including broader intentional electromagnetic interference (IEMI) attacks.

Networking attacks can lead to financial and reputational damage, unauthorized data access, theft of sensitive information, and manipulation of robot actions. Such breaches may compromise the confidentiality, integrity, and availability of robotic systems; unauthorized access opens the pathway to physical harm caused by manipulated actions of the robots.
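To make the false data injection case concrete, here is a toy sketch of how a manipulated sensor reading turns into manipulated physical behavior. It assumes a one-dimensional proportional controller and an attacker who adds a constant offset to the sensor value; `run_loop`, `sensor_bias`, and `readings_disagree` are hypothetical names chosen for illustration, and the cross-check against a second sensor is just one possible plausibility test, not a complete defense.

```python
def run_loop(target: float, steps: int = 100, sensor_bias: float = 0.0,
             gain: float = 0.3) -> float:
    """Drive a toy 1-D actuator toward `target` and return its true final position."""
    position = 0.0
    for _ in range(steps):
        measured = position + sensor_bias       # attacker shifts the reported value
        position += gain * (target - measured)  # controller trusts the reading
    return position


def readings_disagree(primary: float, secondary: float,
                      tolerance: float = 0.05) -> bool:
    """Flag a mismatch between two sensors that should report the same quantity."""
    return abs(primary - secondary) > tolerance


honest = run_loop(target=1.0)
attacked = run_loop(target=1.0, sensor_bias=0.5)
print(f"true position, no attack:     {honest:.3f}")
print(f"true position, injected bias: {attacked:.3f}")

# The compromised sensor still reports the target, so the controller sees no error.
# An independent second sensor (assumed uncompromised) exposes the discrepancy.
reported_by_compromised_sensor = attacked + 0.5
print("mismatch detected:", readings_disagree(reported_by_compromised_sensor, attacked))
```

The point of the sketch is that the controller behaves "correctly" throughout: it simply acts on false data, which is why the robot settles in the wrong place without raising any internal error.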

Operating System Attacks

Operating system attacks are an increasing risk as the robotics industry shifts focus from hardware to software and integrates GenAI applications. These attacks exploit vulnerabilities in the operating systems or support software of robots, including the Robot Operating System (ROS). Despite its name, ROS is not a traditional operating system (OS) but rather an open-source framework or middleware that provides a collection of tools, libraries, and conventions designed to simplify the task of creating complex and robust robot behavior across a wide variety of robotic platforms.

Common threats involve public accessibility of ROS nodes, weak authentication and authorization mechanisms, and susceptible communication protocols. The open-source nature of ROS also makes it susceptible to the same vulnerabilities that beleaguer open-source software more generally.
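One generic way to address the weak authentication mentioned above is to attach a message authentication code to every command, so that a receiver can reject commands from senders who do not hold a shared secret. The sketch below uses only Python’s standard `hmac` and `hashlib` modules. It is not ROS code and does not reflect how ROS itself handles security; the key, the command format, and the function names `sign_command` and `verify_command` are assumptions made for illustration.

```python
import hashlib
import hmac


def sign_command(key: bytes, command: bytes) -> bytes:
    """Compute an HMAC-SHA256 tag over a command using a shared secret key."""
    return hmac.new(key, command, hashlib.sha256).digest()


def verify_command(key: bytes, command: bytes, tag: bytes) -> bool:
    """Accept the command only if its tag matches (constant-time comparison)."""
    return hmac.compare_digest(sign_command(key, command), tag)


# Hypothetical shared secret and command, for illustration only.
shared_key = b"example-secret-do-not-use-in-production"
command = b"arm.move joint=2 angle=15"
tag = sign_command(shared_key, command)

print(verify_command(shared_key, command, tag))                        # genuine command
print(verify_command(shared_key, b"arm.move joint=2 angle=170", tag))  # tampered command
print(verify_command(b"attacker-guess", command, tag))                 # wrong key
```

A tag like this only establishes that a command came from a key holder and was not altered in transit. It does not by itself prevent replay of old commands or provide confidentiality, which is why it would be one layer among several rather than a complete solution.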

Operating system compromises can lead to security breaches, including leakage of sensitive information, unauthorized system access, and manipulation of robot functionalities. Attackers may gain control over robotic operations, alter system behaviors, and execute unauthorized commands, leading to data theft, operational disruptions, and in severe cases, danger to human safety.

All these risks are heightened as we begin to combine robotics with AI. As I have explored in detail elsewhere, the risks inherent in AI are real, and they increase the closer we get to ‘emergence’. In the same way human bodies are vehicles for the expression of human consciousness, robots stand to become the physical manifestations of AI, whether it is narrow, general or super. Google’s attempt at a “Robotic Constitution” may be a more contemporary upgrade to Asimov’s Three Laws of Robotics, but it’s still insufficient to guarantee human safety if AI ‘wakes up’.

My Concern

For nearly three decades, I have dedicated myself to the study and practice of cyber-kinetic risk management: managing the dangers of cyber attacks aimed at cyber-physical systems, which could potentially harm lives, well-being, or the environment. For an introduction to this convergence of cybersecurity and safety, see my article “Digital Actions, Lethal Consequences: A Cyber-Kinetic Risk Primer”. It has become increasingly clear to me that our preparedness for handling these risks is alarmingly inadequate.

At the same time, I’ve been involved in AI risk management for over 15 years, with a significant portion of my work focused on the dangers associated with AI-driven autonomous weaponry. I’ve been writing more about it on my website Defence.AI. We are even worse at understanding and managing AI risks. I summarized my views in “Marin’s Statement on AI Risk.”

I am convinced that we are not yet ready to effectively integrate and manage these converging risks. Much more work is needed before we can confidently relinquish control of the quintessential cyber-physical system, one that we have not yet mastered securing against cyber threats, to an AI whose behavior we have yet to learn how to govern with certainty.

Kingdom of Saudi Arabia View: Taking the Necessary Precautions

When Muhammad, Saudi Arabia’s first male humanoid robot, was unveiled earlier this year at DeepFest in Riyadh, he made headlines when he seemed to inappropriately touch a female reporter during a presentation. The act was undoubtedly a behavioral aberration, the kind that can be expected from any early-generation robot and the reason Muhammad’s creators warned the public not to stand too close to ‘him’. Yet the incident was a pertinent reminder of the future reality of human-robot interactions, especially in KSA, where Vision 2030 is rapidly moving towards realization. When robots are close enough to touch and be touched, they are also close enough for many forms of physical expression – ensuring those expressions are of a non-threatening kind will require vigilance and ongoing cybersecurity development, especially as we begin to integrate AI.

Recent news reports suggest KSA is making plans to build a fund of about $40 billion to invest in artificial intelligence, a move which would make it the world’s largest investor in AI technologies. This is exciting news, and confirms the Saudi government’s commitment to the digitally-integrated future being pursued in Vision 2030. But there are no guarantees of AI’s safety, and as KSA moves towards greater assimilation of AI and robotics, it’s crucial that decision-makers maintain a focus on cyber-physical security.

In robotics in general, this involves a layered approach that includes stronger encryption, robust authentication and authorization protocols, and enhanced physical security, network defense, and operating system protection. When we add in AI security, the complexity of the situation is multiplied. The Kingdom has shown great leadership in its thinking on this subject, but needs to continue addressing the specific concerns of an AI-driven cyber-physical landscape if it is to continue the positive unfolding of this evolutionary tale.

For 30+ years, I've been committed to protecting people, businesses, and the environment from the physical harm caused by cyber-kinetic threats, blending cybersecurity strategies and resilience and safety measures. Lately, my worries have grown due to the rapid, complex advancements in Artificial Intelligence (AI). Having observed AI's progression for two decades and penned a book on its future, I see it as a unique and escalating threat, especially when applied to military systems, disinformation, or integrated into critical infrastructure like 5G networks or smart grids.