The Urgent Need for Research on AI Consciousness: A Call from Scientists

A group of leading consciousness scientists from the Association for Mathematical Consciousness Science (AMCS) is voicing a pressing need for more research into the possibility of AI systems becoming conscious, highlighting the profound ethical, legal, and safety implications the question holds.

The AMCS, a coalition of experts in the field of consciousness, recently addressed the United Nations, emphasizing the urgent need for increased funding and research into the boundaries between conscious and unconscious AI systems. Their concerns are not just theoretical; they are rooted in the rapid advancements in AI, including the pursuit of artificial general intelligence (AGI) by companies like OpenAI. AGI refers to AI systems capable of understanding, learning, and applying knowledge in ways similar to human intelligence.

Despite the high-profile discussions and summits on AI safety, the specific issue of AI consciousness has been notably absent from them. This oversight could have significant ethical and practical consequences.

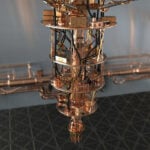

One of the major challenges in this field is the lack of scientifically validated methods to assess consciousness in machines. The uncertainty surrounding AI consciousness is a cause for concern, especially given the pace of AI development. This uncertainty extends to both the possibility of AI systems becoming conscious and the difficulty in recognizing such a development.

The ethical and legal implications of AI consciousness are profound. If AI systems were to become conscious, this would raise questions about their treatment, their rights, and the moral responsibilities of humans towards them. For instance, would it be ethical to deactivate a conscious AI system? Could such systems experience suffering? These questions extend into legal territory: could a conscious AI system be held accountable for its actions, and should it be granted rights akin to those of humans?

As AI systems become more advanced and human-like in their interactions, the public’s understanding and perception of AI consciousness become increasingly important. Without proper scientific analysis and education, people might prematurely conclude that AI systems are conscious or, conversely, dismiss the possibility altogether. This gap in understanding underscores the need for scientists to be able to offer informed guidance to the public.

For more information see: Association for Mathematical Consciousness Science (AMCS)

For 30+ years, I've been committed to protecting people, businesses, and the environment from the physical harm caused by cyber-kinetic threats, blending cybersecurity strategies with resilience and safety measures. Lately, my worries have grown due to the rapid, complex advancements in Artificial Intelligence (AI). Having observed AI's progression for two decades and penned a book on its future, I see it as a unique and escalating threat, especially when applied to military systems, disinformation, or integrated into critical infrastructure like 5G networks or smart grids. More about me, and about Defence.AI.