How to Defend Neural Networks from Neural Trojan Attacks

Neural networks, inspired by the human brain, play a pivotal role in modern technology, powering applications like voice recognition and medical diagnosis. However, their complexity makes them vulnerable to cybersecurity threats, specifically Trojan attacks, which can manipulate them to make incorrect decisions [1]. Given their increasing prevalence in systems that affect our daily lives, from smartphones to healthcare, it’s crucial to understand the importance of securing these advanced technologies against such vulnerabilities.

The Trojan Threat in Neural Networks

What is a Trojan Attack?

In the context of computer security, a “Trojan attack” refers to malicious software (often called “malware”) that disguises itself as something benign or trustworthy to gain access to a system. Once inside, it can unleash harmful operations. Named after the ancient Greek story of the Trojan Horse, where soldiers hid inside a wooden horse to infiltrate Troy, a Trojan attack similarly deceives systems or users into letting it through the gates.

What are Neural Trojans?

Neural networks learn from data. They are trained on large datasets to recognize patterns or make decisions. A Trojan attack in a neural network typically involves injecting malicious data into this training dataset. This ‘poisoned’ data is crafted in such a way that the neural network begins to associate it with a certain output, creating a hidden vulnerability. The neural network will behave normally on most inputs. However, when inputs with specific triggers are entered, it can cause the neural network to behave dangerously, unpredictably, or make incorrect decisions, often without any noticeable signs of tampering.

In other words, an attacker might add a specific ‘trigger’ to input data, such as a particular pattern in an image or a specific sequence of words in a text. When the neural network later encounters this trigger, it misbehaves in a way that benefits the attacker. For example, the vision model in a self-driving car might misidentify a stop sign as a speed limit sign [2] when a small sticker is placed on the stop sign.
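To make the poisoning step concrete, here is a minimal sketch of how a trigger might be stamped onto a fraction of training samples. It assumes images are plain 2D lists of floats; the function names, the bottom-right square trigger, and the 10% poisoning rate are illustrative assumptions, not details of any specific published attack.

```python
import random

def stamp_trigger(image, patch_size=2, value=1.0):
    """Return a copy of a 2D image with a bright square in the bottom-right corner."""
    stamped = [row[:] for row in image]
    height, width = len(stamped), len(stamped[0])
    for r in range(height - patch_size, height):
        for c in range(width - patch_size, width):
            stamped[r][c] = value
    return stamped

def poison_dataset(samples, target_label, rate=0.1, seed=0):
    """Stamp the trigger on a random fraction of samples and relabel them."""
    rng = random.Random(seed)
    n_poison = int(len(samples) * rate)
    chosen = set(rng.sample(range(len(samples)), n_poison))
    poisoned = []
    for i, (image, label) in enumerate(samples):
        if i in chosen:
            # Poisoned sample: trigger added, label switched to the attacker's target.
            poisoned.append((stamp_trigger(image), target_label))
        else:
            poisoned.append((image, label))
    return poisoned, chosen

# Toy data: twenty 4x4 all-zero "images" with label 0 (say, "stop sign").
clean = [([[0.0] * 4 for _ in range(4)], 0) for _ in range(20)]
poisoned, chosen = poison_dataset(clean, target_label=1, rate=0.1)
```

A model trained on `poisoned` sees mostly correct examples, which is why its accuracy on clean inputs can remain high while it learns to associate the corner patch with the target label.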

Neural Trojans are a subset of a broader category of ML Backdoor attacks.

Examples

Healthcare Systems: Medical imaging techniques like X-rays, MRI scans, and CT scans increasingly rely on machine learning algorithms for automated diagnosis. An attacker could introduce a subtle but malicious alteration into an image that a doctor would likely overlook, but the machine would interpret as a particular condition. This could lead to life-threatening situations like misdiagnosis and the subsequent application of incorrect treatments. For example, imagine a scenario where a Trojan attack leads a machine to misdiagnose a benign tumor as malignant, leading to unnecessary and harmful treatments for the patient.

Personal Assistants: Smart home devices like Amazon’s Alexa, Google Home, and Apple’s Siri have become integrated into many households. These devices use neural networks to understand and process voice commands. A Trojan attack could change the behavior of these virtual assistants to convert them into surveillance devices, listening in on private conversations and sending the data back to attackers. Alternatively, Trojans could manipulate the assistant to execute harmful tasks, such as unlocking smart doors without authentication or making unauthorized purchases.

Automotive Industry: Self-driving cars are inching closer to becoming a daily reality, and their operation depends heavily on neural networks to interpret data from sensors, cameras, and radars. Although there are no known instances of Trojan attacks causing real-world accidents, security experts have conducted simulations that show how easily these attacks could manipulate a car’s decision-making. For instance, a Trojan could make a vehicle interpret a stop sign as a speed limit sign [1], potentially causing accidents at intersections. This is just one example among the growing number of AI-driven cyber-physical systems in which an exploited model can cause cyber-kinetic impacts. The stakes are extremely high, given the life-or-death nature of these systems.

Financial Sector: Financial firms use machine learning algorithms to sift through enormous amounts of transaction data to detect fraudulent activities. A Trojan attack could inject malicious triggers into the training data, causing the algorithm to intentionally overlook certain types of unusual but fraudulent transactions. This could allow criminals to siphon off large sums of money over time without detection. For example, a compromised algorithm might ignore wire transfers below a certain amount, allowing attackers to perform multiple low-value transactions that collectively result in significant financial losses.

Why Are Neural Networks Vulnerable?

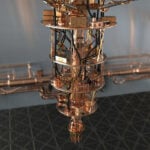

Neural networks are susceptible to Trojan attacks primarily because of their complexity and the way they learn from data. Much like the human brain, which has various regions responsible for different functions, a neural network is composed of layers of interconnected nodes that process and transmit information. During the training phase, where a neural network learns to recognize patterns from a dataset, inserting malicious data can be likened to a person adopting a “misconception” or “preconceived notion” and continuing to believe and act on it. Just as a person may go through most of their life unaffected by the misconception, the neural network may function normally in most cases but act maliciously when triggered by a specific input, akin to making a wrong decision based on a misconception learned long ago.

This vulnerability arises because neural networks are not inherently designed to verify the integrity of the data they are trained on or the commands they receive. They function on the principle of “garbage in, garbage out,” meaning that if they are trained or manipulated with malicious data, the output will also be compromised. In essence, the very adaptability and learning capabilities that make neural networks so powerful also make them susceptible to hidden threats like Trojan attacks.

To further complicate the security, neural networks are typically black boxes and we don’t know how they were trained, preventing us from understanding how exactly the malicious functionality was embedded.

The black-box nature also prevents us from exhaustively testing their behaviour prior to production. For example, in a visual recognition model, we can verify that a set of test images is correctly identified, but what about untested images? We cannot test all possible inputs, and we have no guarantee that the model would not behave in a particularly malicious way given a specific untested input.

We are also unable to rely on established cybersecurity controls and tools such as threat intelligence and intelligence sharing. Putting together a database of known neural Trojans is impossible, primarily because each neural network and each Trojan is unique. Triggers can take on arbitrary shapes and can also be designed to evade detection.

The challenge is further intensified by our limited understanding of how the model exactly stores the learned information, or how to codify the correct information in instances with such a large number of parameters, making it difficult to devise heuristic-based methods to detect hidden anomalies. As machine learning models become more readily available, the processes for training and deploying them are becoming less transparent and more distributed across a growing number of producers, amplifying these security concerns.

Possible Attack Vectors

There are multiple ways to train a hidden, unexpected classification behaviour into a deep neural network.

Model Repository Manipulation

Model repositories such as Model Zoo, PyTorch Hub, or Hugging Face are platforms or databases where pre-trained machine learning models are stored and shared. These repositories allow researchers and developers to access and use state-of-the-art models without having to train them from scratch. However, the open nature of many model repositories makes them susceptible to various forms of manipulation by malicious actors.

An attacker might download a model from popular repositories, introduce a Trojan by retraining the model, and then re-upload the compromised model. Unsuspecting users who later download and deploy this model become vulnerable to the hidden Trojan [3].
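One basic hedge against a swapped or re-uploaded file is to pin the cryptographic hash of the exact artifact you reviewed and refuse to load anything else. The sketch below uses only Python's standard `hashlib` and `hmac` modules; the function names and the idea of a trusted, separately published hash are assumptions for illustration.

```python
import hashlib
import hmac

def sha256_of_file(path, chunk_size=1 << 20):
    """Stream a file through SHA-256 so large model files need not fit in memory."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()

def verify_model_file(path, expected_sha256):
    """Return True only if the file's hash matches the pinned hex digest."""
    actual = sha256_of_file(path)
    return hmac.compare_digest(actual, expected_sha256.lower())

# Hypothetical usage (path and hash are placeholders):
# if not verify_model_file("model.bin", pinned_hash_from_trusted_source):
#     raise RuntimeError("Model file does not match the pinned hash")
```

Note the limits of this check: a matching hash shows that the file is the one the publisher listed, not that the model is free of Trojans. If the original upload was already compromised, hash pinning will not reveal it.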

Poisoned Dataset Deception

Online dataset repositories, such as Kaggle, are treasure troves of information. However, they’re also potential grounds for deception. An attacker could upload a dataset laced with poisoned samples. An unsuspecting user might download this dataset, train their model without detecting the malicious samples, and then release a Trojan-infected model, unaware of its hidden dangers [4].

Machine Learning as a Service (MLaaS) Exploitation

Many organizations rely on external Machine Learning as a Service (MLaaS) platforms for training their models. In this setup, there’s a risk that the MLaaS provider, a malicious insider, or an external attacker could interfere with the training or fine-tuning processes, embedding a Trojan within the model. The unsuspecting organization, focusing primarily on metrics like validation accuracy, might remain oblivious to the Trojan’s presence.

Collaborative Training Deception

Collaborative learning or federated learning [5] [6] is a machine learning technique in which the model is trained through multiple separate sessions, each using its own dataset. Multiple participants (or nodes) train on their local data and share model updates with a central server. The central server aggregates these updates to improve a global model. This is a growing approach since it enables multiple parties to build a common model while protecting the confidentiality and privacy of their own dataset. In such scenarios an adversary could introduce Trojaned updates during the training process by either hacking one of the parties, becoming one of the parties, or exploiting malicious insiders at any one of the parties. Since each party only contributes a portion of the overall training data, the malicious updates might go unnoticed, leading to a compromised global model.
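The aggregation step described above can be sketched in a few lines. The example assumes each participant sends its update as a flat list of floats and that the server takes a plain unweighted average; the coordinate-wise median shown next to it is a robust aggregation rule from the wider literature, included here for contrast and not something this article's sources prescribe.

```python
from statistics import median

def federated_average(updates):
    """Unweighted mean of participant updates, coordinate by coordinate."""
    n = len(updates)
    return [sum(values) / n for values in zip(*updates)]

def coordinate_median(updates):
    """Coordinate-wise median, which is less sensitive to a single extreme update."""
    return [median(values) for values in zip(*updates)]

# Four honest participants send small, similar updates.
honest = [[0.10, -0.20], [0.12, -0.18], [0.08, -0.22], [0.11, -0.19]]
# One malicious participant sends a heavily scaled update.
malicious = [5.0, 5.0]

updates = honest + [malicious]
avg = federated_average(updates)   # dragged towards the malicious update
med = coordinate_median(updates)   # stays close to the honest updates
```

With these toy numbers the average is pulled far from the honest consensus while the median is not. A stealthier adversary who keeps updates within the normal range would not be caught this way, so robust aggregation should be treated as one layer of defence, not a complete answer.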

Transfer Learning Activation

Transfer learning is a machine learning technique where a model developed for a particular task is reused as the starting point for a model on a second task. An organization might download a pre-trained model, not knowing it already contains a Trojan. When they employ transfer learning techniques to adapt the model and freeze its pre-trained layers, the latent Trojan is preserved in those frozen layers and carried over into the new model, where the trigger can still activate it [7].

Model Fine-tuning Exploits

An organization might use public datasets to fine-tune a model for specific tasks. If an adversary has access to these datasets and introduces subtle Trojan triggers, the organization might inadvertently train the Trojan into its model during the fine-tuning process.

Compromised Model Update Mechanisms

For models that receive periodic updates (e.g., online learning models), an attacker could target the update mechanism. By injecting malicious updates or Trojaned weights, they can subtly alter the model’s behavior over time.

Trojaned Model Compression

Model compression techniques, like quantization or pruning, are used to reduce the size of models for deployment on edge devices. An adversary could introduce Trojans during the compression process, ensuring that the smaller, compressed model contains the malicious behaviour.

Synthetic Data Injection

In scenarios where models are trained on synthetic or augmented data, an attacker could introduce Trojan patterns into the data generation process. Since synthetic data is algorithmically generated, it might be harder to detect and filter out these malicious patterns.

Defensive Measures

Prevention

One of the most effective ways to prevent Trojan attacks in neural networks is through secure data collection and preprocessing. Ensuring that the data used to train the neural network is clean, well-curated, and sourced from reputable places can go a long way in reducing the risk of introducing Trojan-infected data into the learning algorithm.

Input Preprocessing

Before feeding any input to the model, it can be beneficial to preprocess it to remove potential Trojan triggers. Techniques such as input whitelisting [6], where only approved inputs are processed, or input sanitization, where suspicious patterns are removed, can be effective.
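As one hedged illustration of input sanitization, the sketch below applies a 3x3 median filter to an image before it reaches the model. It assumes images are 2D lists of floats and that the trigger is a tiny, high-contrast pattern; both are simplifying assumptions, and `median_filter` is a hypothetical helper, not part of any particular defence library.

```python
from statistics import median

def median_filter(image):
    """Apply a 3x3 median filter to a 2D image (edges use the available neighbours)."""
    height, width = len(image), len(image[0])
    out = []
    for r in range(height):
        row = []
        for c in range(width):
            window = [
                image[rr][cc]
                for rr in range(max(0, r - 1), min(height, r + 2))
                for cc in range(max(0, c - 1), min(width, c + 2))
            ]
            row.append(median(window))
        out.append(row)
    return out

image = [[0.2] * 5 for _ in range(5)]
image[2][2] = 1.0  # hypothetical single-pixel trigger
cleaned = median_filter(image)  # the isolated bright pixel is smoothed away
```

Smoothing of this kind only helps against small, localized triggers. Larger patches or triggers spread across the whole input would survive it, and aggressive filtering can also reduce accuracy on legitimate inputs.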

Model Inspection

Inspecting the model’s architecture and weights can sometimes reveal anomalies indicative of a Trojan [8]. Techniques like weight clustering or neuron activation analysis can highlight unusual patterns or dependencies that shouldn’t exist in a clean model.

A study by Chen et al. (2019) titled “DeepInspect: A Black-box Trojan Detection and Mitigation Framework for Deep Neural Networks” introduces a method for inspecting DNN models to detect Trojans [8]. The authors propose a systematic framework that leverages multiple inspection techniques to identify and mitigate potential Trojan attacks.

Regularization Techniques

Regularization techniques are methods used in machine learning to prevent overfitting, which occurs when a model learns the training data too closely, including its noise and outliers. Many of them work by adding a penalty to the loss function so that the model remains generalizable to unseen data. In the context of neural Trojan attacks, regularization methods such as dropout, L1/L2 regularization, noise injection, and early stopping can be used during training to prevent the model from fitting too closely to the training data, which might contain Trojan triggers.
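The penalty idea can be written down directly. This is a minimal sketch of L2 regularization, assuming the weights are available as a flat list and using a hypothetical strength parameter `lam`; real training frameworks usually expose this as a weight-decay setting instead.

```python
def l2_penalty(weights, lam):
    """L2 penalty: lam times the sum of squared weights."""
    return lam * sum(w * w for w in weights)

def regularized_loss(data_loss, weights, lam=0.01):
    """Total loss is the data loss plus the L2 penalty on the weights."""
    return data_loss + l2_penalty(weights, lam)

total = regularized_loss(0.5, [1.0, -2.0], lam=0.1)
```

Because large weights are penalized, the model is discouraged from dedicating strong, specialized connections to rare patterns in the training data. That makes a trigger harder to memorize, although it does not guarantee the Trojan fails to embed.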

Data Augmentation

Data augmentation is a widely used technique in machine learning, especially in deep learning, to artificially expand the size of training datasets by applying various transformations to the original data. Its primary goal is to make models more robust and improve their generalization capabilities, which can also reduce their susceptibility to Trojans [3]. Techniques like random cropping, rotation, or color jitter can be effective.
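Two of the transformations mentioned above, random cropping and rotation, can be sketched with the standard library alone. The example assumes images are 2D lists and restricts rotation to multiples of 90 degrees for simplicity; the function names and crop size are illustrative.

```python
import random

def random_crop(image, crop_h, crop_w, rng):
    """Cut a crop_h x crop_w window at a random position."""
    height, width = len(image), len(image[0])
    top = rng.randint(0, height - crop_h)
    left = rng.randint(0, width - crop_w)
    return [row[left:left + crop_w] for row in image[top:top + crop_h]]

def random_rotate90(image, rng):
    """Rotate clockwise by a random multiple of 90 degrees."""
    rotated = [row[:] for row in image]
    for _ in range(rng.randrange(4)):
        rotated = [list(row) for row in zip(*rotated[::-1])]
    return rotated

def augment(image, rng, crop_h=3, crop_w=3):
    """Apply a random crop followed by a random rotation."""
    return random_rotate90(random_crop(image, crop_h, crop_w, rng), rng)

rng = random.Random(42)
image = [[r * 4 + c for c in range(4)] for r in range(4)]
augmented = augment(image, rng)
```

A trigger that depends on an exact position, such as a patch in one corner, is moved or cut off by these transformations in many training samples, which weakens the association the attacker is trying to teach. Triggers designed to be position-independent would be less affected.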

Detection

Detecting a Trojan attack is often like finding a needle in a haystack, given the complexity of neural networks. However, specialized methods are being developed to identify these threats.

Anomaly Detection

Anomaly detection, also known as outlier detection, is a technique used to identify patterns in data that do not conform to expected behaviour. By continuously monitoring the behaviour of a deployed model, anomaly detection can identify when the model starts producing unexpected outputs. If a neural Trojan is activated, it might cause the model to behave anomalously, triggering an alert.
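One simple form of such monitoring is to track how often each label is predicted over a sliding window and compare it with an expected baseline. The sketch below is an assumption-laden illustration: the class name, window size, tolerance, and baseline shares are all hypothetical choices, not a standard API.

```python
from collections import Counter, deque

class PredictionMonitor:
    """Flag labels whose share of recent predictions drifts above a baseline."""

    def __init__(self, baseline_share, window=100, tolerance=0.2):
        self.baseline_share = baseline_share
        self.window = deque(maxlen=window)
        self.tolerance = tolerance

    def observe(self, label):
        """Record one prediction and return the labels that are over-represented."""
        self.window.append(label)
        if len(self.window) < self.window.maxlen:
            return []  # not enough history yet
        counts = Counter(self.window)
        total = len(self.window)
        return [
            lab for lab, count in counts.items()
            if count / total - self.baseline_share.get(lab, 0.0) > self.tolerance
        ]

monitor = PredictionMonitor({"stop": 0.5, "speed_limit": 0.5}, window=10, tolerance=0.2)
alerts = []
# Balanced traffic first, then a burst of "speed_limit" predictions.
for label in ["stop", "speed_limit"] * 5 + ["speed_limit"] * 6:
    alerts = monitor.observe(label)
```

After the burst, `speed_limit` makes up 80% of the window against a 50% baseline, so it is reported. A monitor like this can only catch Trojans that are triggered often enough to shift the output distribution; a trigger used once would pass unnoticed.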

Neural Cleanse

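Neural Cleanse [10] is a defense mechanism that identifies potential backdoor triggers in a model and then neutralizes them. For each output label, it reverse-engineers the smallest input perturbation that causes the model to misclassify inputs as that label. A label whose reverse-engineered trigger is abnormally small compared with the others is flagged as a likely backdoor target.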
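The outlier test at the end of the Neural Cleanse pipeline [10] can be sketched on its own. The paper reverse-engineers a candidate trigger per label, measures its size (L1 norm), and uses the median absolute deviation (MAD) to find labels whose trigger is unusually small. The code below covers only that last step; the trigger norms are made-up numbers, and the optimization that produces them is omitted.

```python
from statistics import median

def anomaly_indices(trigger_norms):
    """Map each label to |norm - median| / (1.4826 * MAD)."""
    values = list(trigger_norms.values())
    med = median(values)
    mad = median(abs(v - med) for v in values)
    scale = 1.4826 * mad  # consistency constant for normally distributed data
    if scale == 0:
        return {label: 0.0 for label in trigger_norms}
    return {label: abs(norm - med) / scale for label, norm in trigger_norms.items()}

def flag_suspicious_labels(trigger_norms, threshold=2.0):
    """Flag labels whose trigger is an outlier on the small side."""
    med = median(trigger_norms.values())
    indices = anomaly_indices(trigger_norms)
    return [
        label for label, index in indices.items()
        if index > threshold and trigger_norms[label] < med
    ]

# Hypothetical L1 norms of reverse-engineered triggers, one per output label.
norms = {0: 95.0, 1: 102.0, 2: 98.0, 3: 12.0, 4: 105.0, 5: 99.0, 6: 101.0}
suspicious = flag_suspicious_labels(norms)
```

Here label 3 needs a far smaller perturbation than the rest, which is the signature of a backdoor: the attacker has already taught the model a shortcut to that label.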

Reverse Engineering

Reverse engineering refers to the process of analyzing a trained model to understand its internal workings, structures, and decision-making processes. Techniques like LIME or SHAP can be used to interpret the model’s decisions. By dissecting a trained model layer by layer, reverse engineering could identify unusual patterns or anomalies in the weights and activations. These anomalies can be indicative of a neural Trojan. Reverse engineering a trigger could also help us understand which neurons are activated by the trigger, which in turn could help us build proactive filters.

External Validation

External validation refers to the process of evaluating a trained model’s performance on a trusted dataset that it has never seen before. This dataset is entirely separate from the training and internal validation datasets. Since the external validation dataset is independent of the training data, it provides an unbiased evaluation of the model’s performance. Any significant deviation in performance on this dataset compared to the training or internal validation datasets can indicate the presence of a Trojan.

Mitigation

Detecting a neural Trojan is only the first step. Effective mitigation and response techniques are essential to ensure the safety and reliability of AI systems. Some machine learning models are being designed to be more resilient to Trojan attacks, capable of identifying and nullifying malicious triggers within themselves. For others, some of the techniques below are required to help restore model integrity.

Filter for Adversarial Input

If we are able to reverse engineer the trigger, we can develop filters to block the specific inputs (triggers) that activate the Trojan, preventing the malicious behaviour from manifesting [10].

Patching via Unlearning

Another potential approach, which also assumes we were able to reverse engineer the trigger, is to train the model to unlearn the trigger [10].

Model Re-training

If a model is suspected to be Trojaned, one of the most straightforward defenses is to retrain it using a verified clean dataset, effectively “overwriting” the malicious patterns introduced by the Trojan. Depending on the severity of the Trojan attack and the size of the dataset, this could involve fine-tuning the existing model or a complete re-training from scratch.

Patching via Fine-pruning

Fine-pruning is a combination of pruning and fine-tuning defences [9]. The pruning defence reduces the size of the backdoored network by eliminating neurons that are dormant on clean inputs, consequently disabling backdoor behaviour. However, this defence on its own is easy to evade by concentrating the clean and backdoor behaviour onto the same set of neurons. Fine-tuning is a small amount of local retraining on a clean training dataset, which can somewhat reduce Trojan risk exposure.

A study by Liu et al. (2018) titled “Fine-Pruning: Defending Against Backdooring Attacks on Deep Neural Networks” [9] introduces fine-pruning and demonstrates that it can effectively neutralize backdoor attacks by removing the malicious neurons introduced by the attacker.

The fine-pruning technique relies on the removal of certain neurons or weights from a neural network without significantly compromising its performance. The idea is to identify and eliminate the redundant or less important parts of the network. In the context of neural Trojan attacks, fine-pruning can be particularly effective because Trojans often introduce malicious patterns that rely on specific neurons or weights. By pruning the network, there’s a chance that the malicious components introduced by the Trojan can be removed.
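The pruning half of this defence can be sketched as follows. The example assumes we have recorded one layer's activations on a batch of trusted clean inputs, as a list of per-input activation vectors, and it masks the least-active neurons. The function names and the 25% pruning fraction are illustrative, and the subsequent fine-tuning step on clean data is omitted.

```python
def mean_activations(activations):
    """Average each neuron's activation over a batch of clean inputs."""
    n = len(activations)
    return [sum(column) / n for column in zip(*activations)]

def dormant_neuron_mask(activations, prune_fraction=0.25):
    """Return a 0/1 mask that zeroes the least-active neurons on clean inputs."""
    means = mean_activations(activations)
    n_prune = int(len(means) * prune_fraction)
    order = sorted(range(len(means)), key=lambda i: means[i])
    pruned = set(order[:n_prune])
    return [0 if i in pruned else 1 for i in range(len(means))]

def apply_mask(layer_output, mask):
    """Silence pruned neurons in a layer's output."""
    return [a * m for a, m in zip(layer_output, mask)]

# Rows are clean inputs, columns are neurons. Neuron 1 is nearly silent on clean data.
clean_activations = [
    [0.9, 0.0, 0.4, 0.7],
    [0.8, 0.0, 0.5, 0.6],
    [1.0, 0.1, 0.3, 0.9],
]
mask = dormant_neuron_mask(clean_activations, prune_fraction=0.25)

# Hypothetical activations for a triggered input: the dormant neuron fires strongly.
triggered = [0.2, 3.0, 0.1, 0.3]
masked = apply_mask(triggered, mask)
```

In this toy case the neuron that only responds to the trigger is the one removed. As the text notes, an attacker aware of pruning can spread the backdoor across neurons that are also active on clean inputs, which is why the fine-tuning step is combined with it.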

Model Ensembling

Model ensembling is a technique that combines multiple machine learning models to achieve better predictive performance than any of the individual models alone. In the context of neural Trojan attacks, using an ensemble of models can help in mitigating the impact of Trojans. If one model in the ensemble is compromised, the collective decision of the ensemble might still be correct.
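A majority vote is the simplest way to combine models in this setting. The sketch below treats each model as a plain Python function from an input to a label; the three toy models and the `sticker` input field are hypothetical stand-ins for real classifiers.

```python
from collections import Counter

def majority_vote(predictions):
    """Return the label predicted by the most models."""
    label, _ = Counter(predictions).most_common(1)[0]
    return label

def ensemble_predict(models, x):
    """Query every model and combine their answers by majority vote."""
    return majority_vote([model(x) for model in models])

def clean_model_a(x):
    return "stop"

def clean_model_b(x):
    return "stop"

def trojaned_model(x):
    # Misbehaves only when the trigger is present.
    return "speed_limit" if x.get("sticker") else "stop"

models = [clean_model_a, clean_model_b, trojaned_model]
decision = ensemble_predict(models, {"sticker": True})
```

The two clean models outvote the compromised one, so the ensemble still answers `stop`. This only holds if the models come from independent sources: several models trained on the same poisoned dataset may all carry the same Trojan.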

By incorporating prevention, detection, and mitigation strategies, we can build a more robust defence against Trojan attacks, ensuring that neural networks continue to be a force for good rather than a vulnerable point of exploitation.

Future Outlook

As neural networks become increasingly integral to various aspects of society, research into defending against Trojan attacks has become a burgeoning field. Academia and industry are collaborating on innovative techniques to secure these complex systems, from designing architectures resistant to Trojan attacks to developing advanced detection algorithms that leverage artificial intelligence itself. Companies are investing in in-house cybersecurity teams focused on machine learning, while governments are ramping up initiatives to set security standards and fund research in this critical area. By prioritizing this issue now, the aim is to stay one step ahead of attackers and ensure that as neural networks evolve, their security mechanisms evolve in tandem.

Recent Research on Trojan Attacks in Neural Networks

The field of cybersecurity has seen considerable advances in understanding the vulnerability of neural networks to Trojan attacks. One seminal work in the area [11] investigates the intricacies of incorporating hidden Trojan models directly into neural networks. In a similar vein, [12] provides a comprehensive framework for defending against such covert attacks, shedding light on how network architectures can be modified for greater resilience. Adding a different perspective, a study [13] delves into attacks that utilize clean, unmodified data for deceptive purposes and offers countermeasures to defend against them. In an effort to automate the detection of Trojans, the research [9] proposes methods for identifying maliciously trained models through anomaly detection techniques. Meanwhile, both corporate and governmental bodies are heavily drawing from another impactful paper [14] to standardize security measures across various applications of neural networks. These studies collectively signify a strong commitment from the academic and industrial communities to make neural networks more secure and robust against Trojan threats.

Conclusion

As neural networks continue to permeate every facet of modern life, from healthcare and transportation to personal assistance and financial systems, the urgency to secure these advanced computing models against Trojan attacks has never been greater. Research is making strides in detecting and mitigating these vulnerabilities, and collaborative efforts between academia, industry, and government are essential for staying ahead of increasingly sophisticated threats. While the road to entirely secure neural networks may be long and filled with challenges, the ongoing work in the field offers a promising outlook for creating more resilient systems that can benefit society without compromising security.

References

1. Liu, Y., Ma, S., Aafer, Y., Lee, W. C., Zhai, J., Wang, W., & Zhang, X. (2018). Trojaning Attack on Neural Networks.

2. Eykholt, K., Evtimov, I., Fernandes, E., Li, B., Rahmati, A., Xiao, C., Prakash, A., Kohno, T., & Song, D. (2018). Robust physical-world attacks on Deep Learning Visual Classification. 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition. https://doi.org/10.1109/cvpr.2018.00175

3. Gu, T., Dolan-Gavitt, B., & Garg, S. (2017). BadNets: Identifying Vulnerabilities in the Machine Learning Model Supply Chain. arXiv preprint arXiv:1708.06733.

4. Shafahi, A., Huang, W. R., Najibi, M., Suciu, O., Studer, C., Dumitras, T., & Goldstein, T. (2018). Poison Frogs! Targeted Clean-Label Poisoning Attacks on Neural Networks.

5. Lyu, L., Yu, H., & Yang, Q. (2020). Threats to Federated Learning: A Survey. arXiv preprint arXiv:2003.02133.

6. Kairouz, P., McMahan, H. B., Avent, B., Bellet, A., Bennis, M., Bhagoji, A. N., … & D’Oliveira, R. G. L. (2019). Advances and Open Problems in Federated Learning. arXiv preprint arXiv:1912.04977.

7. Yosinski, J., Clune, J., Bengio, Y., & Lipson, H. (2014). How transferable are features in deep neural networks? arXiv preprint arXiv:1411.1792v1.

8. Chen, H., Fu, C., Zhao, J., & Koushanfar, F. (2019). DeepInspect: A Black-box Trojan Detection and Mitigation Framework for Deep Neural Networks. In Proceedings of the 28th International Joint Conference on Artificial Intelligence.

9. Liu, K., Dolan-Gavitt, B., & Garg, S. (2018). Fine-Pruning: Defending Against Backdooring Attacks on Deep Neural Networks. arXiv preprint arXiv:1805.12185v1.

10. Wang, B., Yao, Y., Shan, S., Li, H., Viswanath, B., Zheng, H., & Zhao, B. Y. (2019). Neural cleanse: Identifying and mitigating backdoor attacks in Neural Networks. 2019 IEEE Symposium on Security and Privacy (SP). https://doi.org/10.1109/sp.2019.00031

11. Guo, C., Wu, R., & Weinberger, K. Q. (2020). On hiding neural networks inside neural networks. arXiv preprint arXiv:2002.10078.

12. Xu, K., Liu, S., Chen, P. Y., Zhao, P., & Lin, X. (2020). Defending against backdoor attack on deep neural networks. arXiv preprint arXiv:2002.12162.

13. Chen, Y., Gong, X., Wang, Q., Di, X., & Huang, H. (2020). Backdoor attacks and defenses for deep neural networks in outsourced cloud environments. IEEE Network, 34(5), 141-147.

14. Zhou, S., Liu, C., Ye, D., Zhu, T., Zhou, W., & Yu, P. S. (2022). Adversarial attacks and defenses in deep learning: From a perspective of cybersecurity. ACM Computing Surveys, 55(8), 1-39.

For 30+ years, I've been committed to protecting people, businesses, and the environment from the physical harm caused by cyber-kinetic threats, blending cybersecurity strategies and resilience and safety measures. Lately, my worries have grown due to the rapid, complex advancements in Artificial Intelligence (AI). Having observed AI's progression for two decades and penned a book on its future, I see it as a unique and escalating threat, especially when applied to military systems, disinformation, or integrated into critical infrastructure like 5G networks or smart grids.

Luka Ivezic

Luka Ivezic is the Lead Cybersecurity Consultant for Europe at the Information Security Forum (ISF), a leading global, independent, and not-for-profit organisation dedicated to cybersecurity and risk management. Before joining ISF, Luka served as a cybersecurity consultant and manager at PwC and Deloitte. His journey in the field began as an independent researcher focused on cyber and geopolitical implications of emerging technologies such as AI, IoT, 5G. He co-authored with Marin the book "The Future of Leadership in the Age of AI". Luka holds a Master's degree from King's College London's Department of War Studies, where he specialized in the disinformation risks posed by AI.