The Unseen Dangers of GAN Poisoning in AI

Introduction

The AI hype wave currently sweeping the world was driven in part by advancements in Generative Adversarial Networks (GANs). GANs have become a cornerstone in driving innovations in data generation, image synthesis, and content creation. However, these neural network architectures are not impervious to cyber vulnerabilities; one emerging and largely overlooked threat is GAN Poisoning. Unlike traditional cyber-attacks that target access controls or the integrity of stored data, GAN Poisoning subtly manipulates the training data or alters the GAN model itself to produce misleading or malicious outputs.

What Are GANs?

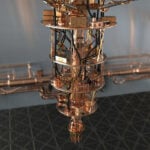

Generative Adversarial Networks, commonly referred to as GANs, are a class of AI models within the broader domain of machine learning. Initially proposed by Ian Goodfellow and his colleagues in 2014, GANs consist of two neural networks, the Generator and the Discriminator, that are trained concurrently through a kind of game-theoretic framework. The Generator aims to produce data that resemble a given dataset, while the Discriminator’s role is to distinguish between data generated by the Generator and real data from the dataset. The training process involves an iterative “cat and mouse” game in which the Generator continuously improves its ability to generate convincing data, and the Discriminator enhances its ability to distinguish fake from real. This adversarial process eventually leads to the Generator creating data that are virtually indistinguishable from real data.
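The game described above is usually written as a minimax objective, V(D, G) = E[log D(x)] + E[log(1 - D(G(z)))], which the Discriminator tries to maximize and the Generator tries to minimize. The following is a minimal sketch of how that value can be estimated from Discriminator outputs; the function name `gan_value` and the sample probabilities are illustrative assumptions, not part of any library.

```python
import math

def gan_value(d_real, d_fake):
    """Estimate V(D, G) = E[log D(x)] + E[log(1 - D(G(z)))] from samples.

    d_real: Discriminator outputs (probabilities in (0, 1)) on real data.
    d_fake: Discriminator outputs on data produced by the Generator.
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

# Hypothetical early-training outputs: the Discriminator separates real from fake easily.
early = gan_value(d_real=[0.9, 0.95, 0.85], d_fake=[0.1, 0.05, 0.2])

# Idealized equilibrium: the Discriminator can only guess (0.5 everywhere).
equilibrium = gan_value(d_real=[0.5, 0.5, 0.5], d_fake=[0.5, 0.5, 0.5])

print(f"early: {early:.3f}, equilibrium: {equilibrium:.3f}")
```

At the idealized equilibrium the value equals -log 4 (about -1.386), which is the point where the Discriminator can no longer tell generated data from real data.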

One of the most striking attributes of GANs is their wide range of applications. These include, but are not limited to, data augmentation, image-to-image translation, super-resolution, style transfer, and even more complex tasks like drug discovery and simulation of physical systems. For instance, GANs are extensively used in the entertainment industry for creating lifelike CGI characters. They’re also deployed in the medical field for generating synthetic medical images for training purposes, filling the gap where real data are often scarce or sensitive.

The industrial significance of GANs cannot be overstated. As businesses and research institutions seek to derive actionable insights from increasingly complex data, the ability to generate high-quality synthetic data has become invaluable. GANs not only offer a solution to the problem of limited or incomplete data but also hold the potential to revolutionize industries by driving innovations that were previously deemed impractical or resource-intensive. Their role is increasingly recognized as critical in accelerating research and development cycles, thereby offering a competitive edge in various sectors. However, as with any technology, the rise of GANs brings forth its own set of challenges and vulnerabilities, one of which is the threat of GAN Poisoning.

What is GAN Poisoning?

GAN Poisoning is a form of adversarial attack aimed at manipulating Generative Adversarial Networks (GANs) during their training phase. It builds on conventional data poisoning, which corrupts training data, and differs from adversarial input attacks, which trick already-trained models: its goal is to alter the GAN’s generative capability so that the model produces deceptive or harmful outputs. The objective is not merely unauthorized access but the generation of misleading or damaging information.

The mechanics of a GAN Poisoning attack target either the training data used by the Discriminator or the model parameters of the Generator and Discriminator themselves. By introducing subtle biases into the training data or tampering directly with the model’s parameters or architecture, an attacker can compel a GAN to generate faulty outputs. These attacks are particularly insidious because they influence the “cat and mouse” training game between the Generator and Discriminator, leading to deeply embedded vulnerabilities that are difficult to detect and rectify.
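To make the data-side attack concrete, here is a toy sketch under simplifying assumptions: the "real" dataset is one-dimensional Gaussian noise, and a hypothetical attacker replaces 5% of it with samples from a different mode. The helper `poison_dataset` and all parameter values are invented for illustration. No GAN is trained here; the point is only that the distribution the Discriminator treats as "real" has shifted, so a Generator trained against it would be pulled toward the attacker’s mode.

```python
import random
import statistics

def poison_dataset(clean, poison_fraction, poison_mean, rng):
    """Return a copy of `clean` with a fraction of samples replaced by attacker-chosen values."""
    poisoned = list(clean)
    n_poison = int(len(poisoned) * poison_fraction)
    # Pick distinct positions to overwrite so exactly n_poison samples change.
    for idx in rng.sample(range(len(poisoned)), n_poison):
        poisoned[idx] = rng.gauss(poison_mean, 0.5)
    return poisoned

rng = random.Random(0)  # fixed seed so the sketch is repeatable
clean_data = [rng.gauss(0.0, 1.0) for _ in range(2000)]
poisoned_data = poison_dataset(clean_data, poison_fraction=0.05, poison_mean=4.0, rng=rng)

clean_mean = statistics.fmean(clean_data)
poisoned_mean = statistics.fmean(poisoned_data)
print(f"clean mean: {clean_mean:.3f}, poisoned mean: {poisoned_mean:.3f}")
```

Even though 95% of the samples are untouched, the summary statistics of the training set move, and the change is small enough to be missed by a casual inspection of individual samples.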

The Unseen Risks of GAN Poisoning

One of the most unnerving aspects of GAN Poisoning is its elusive nature; these attacks are notoriously difficult to detect. The reason lies in the very architecture of GANs, which are designed to improve iteratively until the Generator is so good at creating data that even the Discriminator cannot distinguish them from real inputs. In a poisoned GAN, this process still occurs, but it does so under the influence of the attacker’s manipulations. The symptoms aren’t always immediate or obvious, as the generated data or images may appear legitimate at first glance. To the untrained eye, and often even to experts, spotting the discrepancies can be like finding a needle in a haystack.

The real-world implications of undetected GAN Poisoning are enormous and wide-ranging. If applied to disinformation, a poisoned GAN could generate realistic but entirely false news articles, deepfake videos, or fraudulent academic research. Financially, poisoned GANs could be used to simulate market trends or financial data, misleading investors and potentially causing market instability. From a defence perspective, imagine a GAN trained to generate satellite images being poisoned to omit or falsely represent military installations. These are not just theoretical risks; several case studies have emerged that demonstrate the potential severity of these attacks. For example, researchers have showcased how GANs can be manipulated to generate medical images with false positives or negatives, leading to erroneous clinical decisions.

Methods of Detection and Prevention

Addressing the issue of GAN Poisoning requires a multi-pronged approach that includes detection, prevention, and continual research for improvement. When it comes to detection, several methods have emerged that aim to identify irregularities either in the training data or within the trained GAN model. Techniques such as statistical anomaly detection can be employed to identify unexpected patterns in the generated data, serving as a flag for potential poisoning. Another approach, closer to hardening than to detection, is adversarial training, wherein the GAN is trained with both genuine and poisoned data, teaching it to recognize and resist poisoning attempts.
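One simple way to picture statistical anomaly detection is to compare generated samples against a trusted reference set with a two-sample Kolmogorov-Smirnov statistic, the largest gap between the two empirical distribution functions. The sketch below is an illustration only: the one-dimensional data, the 20% poisoned mode, and the alert threshold of 0.1 are assumptions chosen for the example, and real GAN outputs would first need to be reduced to meaningful numeric features.

```python
import bisect
import random

def ks_statistic(reference, candidate):
    """Two-sample Kolmogorov-Smirnov statistic: largest gap between the two empirical CDFs."""
    ref_sorted = sorted(reference)
    cand_sorted = sorted(candidate)
    max_gap = 0.0
    # The largest gap always occurs at one of the observed data points.
    for value in ref_sorted + cand_sorted:
        ref_cdf = bisect.bisect_right(ref_sorted, value) / len(ref_sorted)
        cand_cdf = bisect.bisect_right(cand_sorted, value) / len(cand_sorted)
        max_gap = max(max_gap, abs(ref_cdf - cand_cdf))
    return max_gap

rng = random.Random(1)  # fixed seed so the sketch is repeatable
trusted = [rng.gauss(0.0, 1.0) for _ in range(1000)]
healthy_output = [rng.gauss(0.0, 1.0) for _ in range(1000)]
# Hypothetical poisoned output: roughly 20% of samples come from a shifted mode.
suspect_output = [
    rng.gauss(4.0, 0.5) if rng.random() < 0.20 else rng.gauss(0.0, 1.0)
    for _ in range(1000)
]

THRESHOLD = 0.1  # assumed alert threshold for this toy setup
for name, output in [("healthy", healthy_output), ("suspect", suspect_output)]:
    gap = ks_statistic(trusted, output)
    print(f"{name}: KS gap = {gap:.3f}, flagged = {gap > THRESHOLD}")
```

A check like this only works when a trusted reference set exists and the poisoning changes a measurable feature; a carefully targeted attack may leave such aggregate statistics nearly unchanged.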

As for prevention, one best practice is to maintain a robust data verification and validation pipeline. Using only verified, high-quality datasets can minimize the risks of introducing poisoned data. Organizations are also turning to secure multi-party computation techniques to ensure that the GAN training process is secure and that no single entity can manipulate it. In addition, leveraging model explainability to interpret GAN decisions can provide an additional layer of scrutiny, helping to identify inconsistencies that may point to poisoning.
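One small building block of such a verification pipeline is an integrity check: hash every training file when the dataset is first vetted, then refuse to train if any file later differs from that trusted manifest. The sketch below uses in-memory byte strings in place of real files; the file names and the helper `find_tampered` are hypothetical.

```python
import hashlib

def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of a file's contents."""
    return hashlib.sha256(data).hexdigest()

def find_tampered(files: dict, manifest: dict) -> list:
    """Return names whose contents are absent from, or disagree with, the trusted manifest."""
    return sorted(
        name for name, content in files.items()
        if manifest.get(name) != sha256_hex(content)
    )

# Manifest recorded when the dataset was vetted (hypothetical file names and contents).
original = {"img_001.png": b"pixels-a", "img_002.png": b"pixels-b"}
manifest = {name: sha256_hex(content) for name, content in original.items()}

# Dataset as it arrives at training time: one file altered, one unexpected file added.
received = dict(original)
received["img_002.png"] = b"pixels-b-modified"
received["img_003.png"] = b"unexpected extra sample"

print(find_tampered(received, manifest))  # ['img_002.png', 'img_003.png']
```

This catches modification after vetting, not samples that were already poisoned when the manifest was created, so it complements rather than replaces content-level validation.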

Ongoing research in this area is dynamic, encompassing both technological and ethical considerations. For example, researchers are exploring federated learning as a way to decentralize the training data, making it more challenging for an attacker to poison a GAN. Ethical guidelines are also being developed to address the responsibility of AI practitioners in preventing and detecting GAN Poisoning. Given the evolving nature of this threat, continuous research and collaboration among academia, industry, and policymakers are essential to keep pace with attackers and to develop more effective countermeasures.

By incorporating these methods and adhering to best practices, it’s possible to build more resilient GANs. However, as is the case with any cybersecurity measures, it’s a constant game of cat and mouse. The key is to stay ahead of the curve through ongoing vigilance, research, and technological advancement.

The Broader Implications

The risks associated with GAN Poisoning have far-reaching implications that extend beyond the technology itself, touching on the future of AI, cybersecurity, and ethical governance. As GANs become more embedded in critical systems such as healthcare, finance, and national security, the urgency of addressing the poisoning risk grows with them.

From an ethical standpoint, the emergence of GAN Poisoning introduces questions about responsibility and accountability. Who is responsible when a poisoned GAN produces misleading medical diagnoses or fraudulent financial data? As highlighted by Arora and Arora (2022) in “Generative adversarial networks and synthetic patient data: current challenges and future perspectives,” establishing ethical guidelines specific to GAN technology is becoming essential. The study argues for a holistic approach that includes not just technological solutions but ethical frameworks that guide AI development and deployment.

Moreover, the issue raises concerns about the democratization of AI. While GANs offer a multitude of beneficial applications, their susceptibility to poisoning can be seen as a form of ‘technological inequality.’ Those with the expertise to manipulate these networks can wield undue influence, as discussed in the paper “Poisoning Attack in Federated Learning using Generative Adversarial Nets” (Zhang et al., 2019).

Given these broader implications, it is clear that addressing GAN Poisoning isn’t just a technical challenge but a societal one, requiring interdisciplinary efforts involving technologists, ethicists, policymakers, and legal experts. Future directions in this research area are increasingly focusing on the co-development of technological and ethical strategies, aiming for a secure and responsible AI ecosystem.

Conclusion

The emerging threat of GAN Poisoning casts a shadow over recent AI advancements, presenting a unique set of cybersecurity challenges that are both elusive to detect and devastating in impact. The risks extend from producing misleading information to posing national security threats, with ethical implications that raise questions about responsibility and the equitable use of technology. Addressing these vulnerabilities requires an interdisciplinary approach that combines state-of-the-art detection methods, ethical guidelines, and continual research.

References

- Arora, A., & Arora, A. (2022). Generative adversarial networks and synthetic patient data: current challenges and future perspectives. Future Healthcare Journal, 9(2), 190.

- Vuppalapati, C. (2021). Democratization of Artificial Intelligence for the Future of Humanity. CRC Press.

- Zhang, J., Chen, J., Wu, D., Chen, B., & Yu, S. (2019, August). Poisoning attack in federated learning using generative adversarial nets. In 2019 18th IEEE international conference on trust, security and privacy in computing and communications/13th IEEE international conference on big data science and engineering (TrustCom/BigDataSE) (pp. 374-380). IEEE.
